Why Build a PDF Chatbot?

Extracting information from long PDF documents — research papers, technical manuals, business reports — can be time-consuming. Instead of manually scanning pages, imagine simply asking:

“What are the key findings?”

“Summarize section 3.”

“Who is the author?”

This application solves that problem by integrating:

Streamlit — for building an interactive web UI

LangChain — for managing LLM workflows

FAISS — for efficient similarity search

Google Generative AI — for embeddings + Gemini model access

Gemini 2.0 Flash — for conversational responses

Together, these tools create a Retrieval-Augmented Generation (RAG) system.

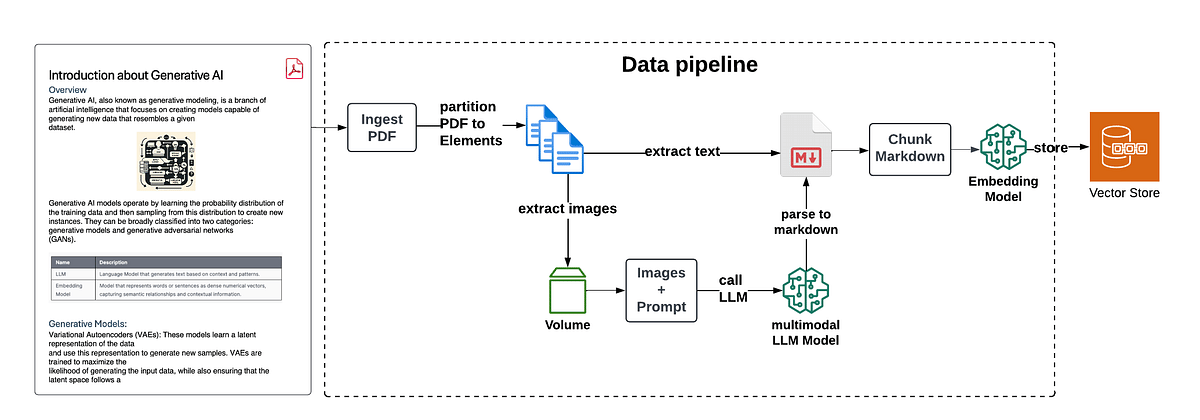

How the System Works (Architecture Overview)

Step-by-Step Flow:

Upload PDF

Extract text

Split text into chunks

Convert chunks into embeddings

Store embeddings in FAISS

User asks a question

Relevant chunks are retrieved

Gemini generates a context-aware answer
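The flow above can be sketched end to end without any external services. This is a toy illustration only: word-overlap scoring stands in for embeddings and FAISS, the chunks do not overlap, and the last step returns the assembled prompt instead of calling Gemini. All names here (`split_into_chunks`, `tokenize`, `score`, `retrieve`, `build_prompt`) are hypothetical and not part of the app.

```python
import re

def split_into_chunks(text, chunk_size=80):
    """Split text into consecutive pieces of at most chunk_size characters."""
    return [text[i:i + chunk_size] for i in range(0, len(text), chunk_size)]

def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def score(question, chunk):
    """Count shared words; a crude stand-in for embedding similarity."""
    return len(tokenize(question) & tokenize(chunk))

def retrieve(question, chunks, k=2):
    """Return the k chunks with the highest overlap score."""
    return sorted(chunks, key=lambda c: score(question, c), reverse=True)[:k]

def build_prompt(question, context_chunks):
    """Assemble the text that would be sent to the LLM in the final step."""
    context = "\n".join(context_chunks)
    return f"Context:\n{context}\n\nQuestion:\n{question}\n\nAnswer:"

# Stand-in for text extracted from an uploaded PDF.
document = (
    "The report was written by Ada Lovelace. "
    "Section 3 covers the experimental setup. "
    "The key findings show a 20 percent speedup."
)
chunks = split_into_chunks(document, chunk_size=45)
top = retrieve("Who wrote the report?", chunks, k=1)
prompt = build_prompt("Who wrote the report?", top)
```

In the real application each of these stand-ins is replaced by the corresponding component described in the walkthrough.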

Code Walkthrough

1️⃣ Setting Up the Environment

We load environment variables and configure access to Google’s Generative AI services.

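    from dotenv import load_dotenv
    import os
    from langchain_google_genai import GoogleGenerativeAIEmbeddings
    import google.generativeai as genai

    # Read variables from a local .env file into the process environment.
    load_dotenv()

    # Configure the Google Generative AI client with the key from the environment.
    genai.configure(api_key=os.getenv("GOOGLE_API_KEY"))

This keeps the API key in a .env file instead of hard-coding it in the source.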
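Because `os.getenv` returns `None` when a variable is missing, a misconfigured .env file may not be noticed until the first API call. A minimal sketch of a fail-fast check, assuming a hypothetical helper named `require_env` that is not part of the original app:

```python
import os

def require_env(name):
    """Return the value of an environment variable or raise a clear error."""
    value = os.getenv(name)
    if not value:
        # Covers both a missing variable and an empty string.
        raise RuntimeError(f"{name} is not set; add it to your .env file")
    return value
```

The setup code could then call `genai.configure(api_key=require_env("GOOGLE_API_KEY"))` so that a missing key stops the app at startup with a readable message.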

2️⃣ Extracting Text from PDFs

We use PyPDF2 to read and extract text from uploaded files.

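    from PyPDF2 import PdfReader

    def get_pdf_text(pdf_docs):
        """Concatenate the extracted text of every page in every uploaded PDF."""
        text = ""
        for pdf in pdf_docs:
            pdf_reader = PdfReader(pdf)
            for page in pdf_reader.pages:
                # Guard against pages that yield no extractable text.
                text += page.extract_text() or ""
        return text

3️⃣ Splitting Text into Chunks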

Embedding a whole document as one block makes retrieval imprecise, so we divide the text into smaller chunks. The chunks overlap so that text near a boundary appears in both neighbouring chunks.

    from langchain.text_splitter import RecursiveCharacterTextSplitter

    def get_text_chunks(text):
        # Chunks of up to 10,000 characters, each sharing 1,000 with its neighbour.
        splitter = RecursiveCharacterTextSplitter(
            chunk_size=10000,
            chunk_overlap=1000
        )
        return splitter.split_text(text)

Chunking the text this way improves search relevance and retrieval accuracy.
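To make the effect of `chunk_size` and `chunk_overlap` concrete, here is a simplified sliding-window splitter. It is an illustration only: `RecursiveCharacterTextSplitter` also tries to break on separators such as paragraph breaks, newlines, and spaces, which this hypothetical `sliding_chunks` function does not attempt.

```python
def sliding_chunks(text, chunk_size, chunk_overlap):
    """Split text into fixed-size windows that share chunk_overlap characters."""
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be non-negative and smaller than chunk_size")
    step = chunk_size - chunk_overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the window already reached the end of the text
    return chunks

chunks = sliding_chunks("abcdefghij", chunk_size=4, chunk_overlap=2)
# chunks == ['abcd', 'cdef', 'efgh', 'ghij']
```

Each chunk repeats the last two characters of the previous one, which is the same relationship the 1,000-character overlap creates between 10,000-character chunks.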

4️⃣ Creating a FAISS Vector Store

Each chunk is converted into an embedding vector using Google’s embedding model and stored in a FAISS index.

    from langchain.vectorstores import FAISS
    from langchain_google_genai import GoogleGenerativeAIEmbeddings

    def get_vector_store(text_chunks):
        # Embed each chunk with Google's embedding model.
        embeddings = GoogleGenerativeAIEmbeddings(model="models/embedding-001")
        # Build the FAISS index in memory, then save it to the faiss_index folder.
        vector_store = FAISS.from_texts(text_chunks, embedding=embeddings)
        vector_store.save_local("faiss_index")

Now the document is searchable using semantic similarity.
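Conceptually, a similarity search finds the stored vectors that lie closest to the query vector. The sketch below shows that idea as a brute-force search in plain Python, with made-up three-dimensional vectors standing in for real embeddings. The `l2_distance` and `nearest` functions and the sample data are hypothetical; FAISS uses optimized native code, not a loop like this.

```python
import math

def l2_distance(a, b):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def nearest(query, vectors, k=1):
    """Return the indices of the k stored vectors closest to the query."""
    ranked = sorted(range(len(vectors)), key=lambda i: l2_distance(query, vectors[i]))
    return ranked[:k]

# Toy "embeddings" for three chunks.
stored = [
    [0.9, 0.1, 0.0],  # chunk 0
    [0.0, 1.0, 0.0],  # chunk 1
    [0.1, 0.0, 0.9],  # chunk 2
]
query = [1.0, 0.0, 0.0]
closest = nearest(query, stored, k=2)
# closest == [0, 2]
```

The returned indices identify which chunks would be handed to the language model as context.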

5️⃣ Building the Conversational Chain

We configure Gemini 2.0 Flash with a structured prompt to ensure grounded answers.

    from langchain.prompts import PromptTemplate
    from langchain.chains.question_answering import load_qa_chain
    from langchain_google_genai import ChatGoogleGenerativeAI

    def get_conversational_chain():
        prompt_template = """
        Answer the question as detailed as possible from the provided context.
        If the answer is not in the context, say:
        "answer is not available in the context."

        Context:
        {context}

        Question:
        {question}

        Answer:
        """
        # A low temperature reduces randomness in the generated answer.
        model = ChatGoogleGenerativeAI(
            model="gemini-2.0-flash-exp",
            temperature=0.3
        )
        prompt = PromptTemplate(
            template=prompt_template,
            input_variables=["context", "question"]
        )
        # The "stuff" chain type places all retrieved documents into one prompt.
        chain = load_qa_chain(model, chain_type="stuff", prompt=prompt)
        return chain

The prompt steers the model toward answers grounded in the retrieved context and gives it an explicit fallback when the context lacks the answer.
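With `chain_type="stuff"`, the retrieved chunks are combined into one block of text that fills the `{context}` placeholder. The sketch below imitates that step with plain string formatting. The `fill_prompt` helper is hypothetical, and the separator between chunks is an assumption, not a statement of what LangChain does internally.

```python
PROMPT_TEMPLATE = (
    "Answer the question as detailed as possible from the provided context.\n"
    "If the answer is not in the context, say:\n"
    '"answer is not available in the context."\n\n'
    "Context:\n{context}\n\n"
    "Question:\n{question}\n\n"
    "Answer:\n"
)

def fill_prompt(chunks, question, separator="\n\n"):
    """Join the retrieved chunks and substitute them into the template."""
    context = separator.join(chunks)
    return PROMPT_TEMPLATE.format(context=context, question=question)

prompt = fill_prompt(
    ["The author is Ada Lovelace.", "Section 3 describes the setup."],
    "Who is the author?",
)
```

Printing `prompt` shows the full text the model would receive, which is a useful check when answers seem to ignore the document.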

6️⃣ Handling User Queries

When a question is asked:

Load FAISS index

Perform similarity search

Pass results to Gemini

Display answer

    import streamlit as st

    def user_input(user_question):
        # Use the same embedding model that built the index.
        embeddings = GoogleGenerativeAIEmbeddings(model="models/embedding-001")
        # Loading relies on pickle, so only load index files you created yourself.
        new_db = FAISS.load_local(
            "faiss_index",
            embeddings,
            allow_dangerous_deserialization=True
        )
        # Retrieve the chunks most similar to the question.
        docs = new_db.similarity_search(user_question)
        chain = get_conversational_chain()
        response = chain(
            {"input_documents": docs, "question": user_question},
            return_only_outputs=True
        )
        st.markdown(f"### Reply:\n{response['output_text']}")

7️⃣ Building the Streamlit Interface

Streamlit provides:

PDF upload sidebar

Processing button

Chat input field

Response display
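The interface code is not shown here, so the following is only a sketch of how these four elements might be wired together. The `render_app` function is hypothetical: it receives the Streamlit module as `st` plus two callbacks, so the layout can be exercised without a running server. The widget labels are placeholders.

```python
def render_app(st, process_pdfs, answer_question):
    """Lay out the upload sidebar and the question box using the module `st`."""
    st.header("Chat with PDF")

    # Chat input field: Streamlit reruns the script when the user submits a value.
    question = st.text_input("Ask a question about the uploaded PDFs")
    if question:
        answer_question(question)  # expected to display its own reply

    # PDF upload sidebar with a processing button.
    with st.sidebar:
        st.title("Menu")
        pdf_docs = st.file_uploader("Upload PDF files", accept_multiple_files=True)
        # Only process when the button is pressed and at least one file is present.
        if st.button("Submit & Process") and pdf_docs:
            with st.spinner("Processing..."):
                process_pdfs(pdf_docs)
            st.success("Done")
```

In chat_pdf.py this could be called as `render_app(st, process_pdfs, user_input)` after `import streamlit as st`, where `process_pdfs` is a hypothetical function that chains `get_pdf_text`, `get_text_chunks`, and `get_vector_store`.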

Run the app using:

    streamlit run chat_pdf.py

Key Benefits of This Approach

✅ Turns static PDFs into interactive chat experiences

✅ Saves time when reviewing large documents

✅ Uses advanced AI + vector search

✅ Offers a foundation that can be extended toward production use

✅ Demonstrates a real-world RAG implementation

Final Thoughts

This project highlights how modern AI tools can transform traditional document interaction.

It combines:

Semantic search (FAISS)

Embeddings

LLMs like Gemini 2.0 Flash

An interactive Streamlit UI

Together, these components enable natural, conversational knowledge extraction from PDFs.

This architecture can be extended to:

Legal document assistants

Research summarizers

Business intelligence tools

Educational study assistants