The High-Stakes Reality of Modern Deployment

Why Shipping AI Systems in 2026 Is Harder Than Building Them

Meta Description:

Explore the critical deployment challenges of 2026 — from AI-native infrastructure bottlenecks and LLMOps complexity to post-quantum security. A strategic roadmap for building resilient, scalable, and secure deployment pipelines.

In 2026, deployment is no longer the final step of a release cycle.

It is a continuous orchestration layer that determines whether innovation survives contact with reality.

As we move from traditional microservices to AI-native, agentic systems, the difficulty isn’t building the model; it’s running it reliably, securely, and economically at scale.

The promise of instant cloud elasticity often collides with:

Fragmented data pipelines

Escalating inference costs

Shadow AI usage

Multi-cloud synchronization failures

Expanding security attack surfaces

Deployment has become strategic infrastructure — not operational plumbing.

1. The AI Bottleneck: From Prototype to Production

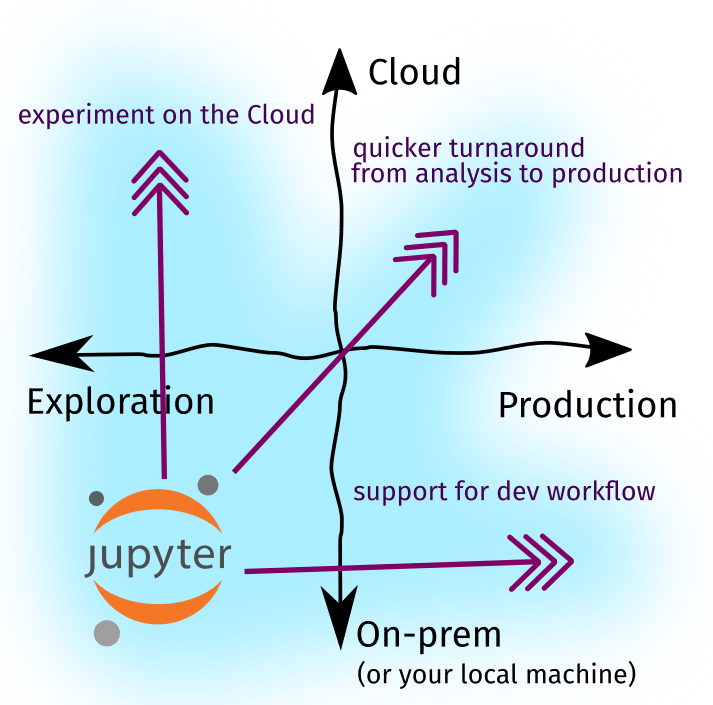

The “Notebook-to-Production” gap remains one of the biggest challenges in modern AI teams.

A model can perform perfectly in a controlled notebook environment.

But once deployed, the real world introduces:

Noisy data

Distribution shifts

Latency variability

Infrastructure constraints

Model Drift & Latency

AI models are not static software.

They degrade over time as real-world data evolves away from training distributions. This phenomenon — model drift — silently erodes performance unless continuously monitored.
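One common way to watch for drift is to compare how a feature (or a model score) is distributed in production against a training-time baseline. The sketch below computes the Population Stability Index (PSI) in plain Python. The function name, the bin count, and the sample data are illustrative assumptions, not part of any specific monitoring product.

```python
import math
from typing import List, Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], bins: int = 10
) -> float:
    """PSI between a baseline sample (expected) and a production sample (actual)."""
    lo, hi = min(expected), max(expected)
    # Equal-width bins over the baseline range; fall back to 1.0 if all values match.
    width = (hi - lo) / bins or 1.0

    def bucket_shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp values outside the baseline range
            counts[idx] += 1
        eps = 1e-6  # floor so empty buckets do not produce log(0)
        return [max(c / len(values), eps) for c in counts]

    e = bucket_shares(expected)
    a = bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical data: a uniform baseline and a production sample shifted upward.
baseline = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]
psi_same = population_stability_index(baseline, baseline)
psi_shifted = population_stability_index(baseline, shifted)
```

A rule of thumb often quoted for PSI is that values above roughly 0.2 deserve investigation, but the right alert threshold depends on the feature and should be calibrated per model.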

At the same time, serving LLMs at scale typically requires specialized accelerators (GPUs/TPUs), whose availability is often constrained by cost and supply chains.

Scale becomes expensive — fast.

MLOps vs. LLMOps

Traditional DevOps cannot manage AI complexity alone.

Modern teams must handle:

Vector database scaling for high-speed RAG retrieval

Prompt governance: versioning prompts like code

Inference cost management: optimizing tokens per response

Continuous evaluation pipelines for behavioral regression
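Two of the items above can be sketched in a few lines: versioning prompts like code, and estimating inference cost from token counts. This is a minimal illustration, assuming a content-hash versioning scheme and made-up per-token prices. `PromptVersion`, `estimate_cost_usd`, and every number below are hypothetical.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptVersion:
    name: str
    template: str

    @property
    def version_id(self) -> str:
        # Content-addressed ID: any edit to the template yields a new version.
        return hashlib.sha256(self.template.encode("utf-8")).hexdigest()[:12]


def estimate_cost_usd(
    input_tokens: int,
    output_tokens: int,
    usd_per_1k_input: float,
    usd_per_1k_output: float,
) -> float:
    """Cost of one request, given token counts and per-1,000-token prices."""
    return (
        input_tokens / 1000 * usd_per_1k_input
        + output_tokens / 1000 * usd_per_1k_output
    )


v1 = PromptVersion("summarize", "Summarize the text below:\n{text}")
v2 = PromptVersion("summarize", "Summarize the text below in 3 bullets:\n{text}")

# Hypothetical prices, chosen only to make the arithmetic easy to follow.
cost = estimate_cost_usd(2000, 500, usd_per_1k_input=0.5, usd_per_1k_output=1.5)
```

Logging `version_id` next to each response makes it possible to tie a behavioral regression or a cost spike back to the exact prompt text that produced it.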

Deployment now blends infrastructure, data science, and governance into one operational discipline.

2. Infrastructure Fragility in a Cloud-Native World

Cloud-native architecture promised resilience.

Instead, it introduced complexity.

Applications now run across:

Multiple cloud providers

Edge nodes

Serverless environments

Containerized microservices

Each layer introduces new synchronization challenges.

Distributed Consistency Challenges

Multi-cloud deployments reduce vendor lock-in, but they introduce latency mismatches and data consistency issues.

A regional congestion spike can cascade across global services.

What looks like a small slowdown becomes a network-wide degradation.

Edge Security Risks

Edge computing reduces latency but increases the attack surface.

Every phone, gateway, and IoT node becomes:

A potential breach point

A data exfiltration risk

A compliance vulnerability

Securing the edge without sacrificing performance is now a primary SRE challenge.

3. The Security Arms Race

Deployment is the moment of maximum vulnerability.

And in 2026, security is no longer human-versus-attacker — it is AI-versus-AI.

Shadow AI

One of the biggest risks is internal.

Employees deploying unauthorized AI tools bypass governance controls. This “shadow AI” creates:

Data leakage

Compliance violations

Untracked integrations

Governance frameworks struggle to keep pace with decentralized experimentation.

Post-Quantum Readiness

A sufficiently large quantum computer could break widely used public-key algorithms such as RSA and elliptic-curve cryptography.

Organizations must now adopt:

Post-Quantum Cryptography (PQC)

Crypto-agile deployment pipelines

Backward-compatible key rotation systems

The transition is delicate. Done poorly, it breaks legacy systems.

Done slowly, it leaves data encrypted today exposed to attackers who store it now and decrypt it later.
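Crypto-agility and backward-compatible key rotation can be illustrated with a small key ring: every token carries the ID of the key that produced it, new tokens use the newest key, and old tokens keep verifying until their key is retired. This is a sketch under stated assumptions. The `KeyRing` class and algorithm names are hypothetical, and the standard-library HMAC hashes are stand-ins only; neither is a post-quantum algorithm.

```python
import hashlib
import hmac
from typing import Callable, Dict, Optional, Tuple

# Hypothetical algorithm registry. Swapping algorithms means adding an entry
# here, not rewriting callers. These are NOT post-quantum schemes.
ALGORITHMS: Dict[str, Callable] = {
    "hmac-sha256": hashlib.sha256,
    "hmac-sha3-256": hashlib.sha3_256,
}


class KeyRing:
    def __init__(self) -> None:
        self._keys: Dict[str, Tuple[str, bytes]] = {}  # key_id -> (algorithm, secret)
        self._active: Optional[str] = None

    def add_key(self, key_id: str, algorithm: str, secret: bytes) -> None:
        """Register a key and make it the one used for new signatures."""
        if algorithm not in ALGORITHMS or ":" in key_id:
            raise ValueError("unknown algorithm or invalid key_id")
        self._keys[key_id] = (algorithm, secret)
        self._active = key_id

    def retire(self, key_id: str) -> None:
        """Drop an old key once nothing signed with it still needs to verify."""
        if key_id == self._active:
            raise ValueError("cannot retire the active key")
        self._keys.pop(key_id, None)

    def sign(self, message: bytes) -> str:
        if self._active is None:
            raise RuntimeError("no active key")
        algorithm, secret = self._keys[self._active]
        tag = hmac.new(secret, message, ALGORITHMS[algorithm]).hexdigest()
        return f"{self._active}:{tag}"  # the key ID travels with the tag

    def verify(self, message: bytes, token: str) -> bool:
        key_id, _, tag = token.partition(":")
        if key_id not in self._keys:
            return False
        algorithm, secret = self._keys[key_id]
        expected = hmac.new(secret, message, ALGORITHMS[algorithm]).hexdigest()
        return hmac.compare_digest(expected, tag)


ring = KeyRing()
ring.add_key("k1", "hmac-sha256", b"old-secret")
old_token = ring.sign(b"payload")
ring.add_key("k2", "hmac-sha3-256", b"new-secret")  # rotate key and algorithm
new_token = ring.sign(b"payload")
```

Because the key ID selects both the secret and the algorithm, legacy tokens keep working during the transition window, and the window closes explicitly when the old key is retired.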

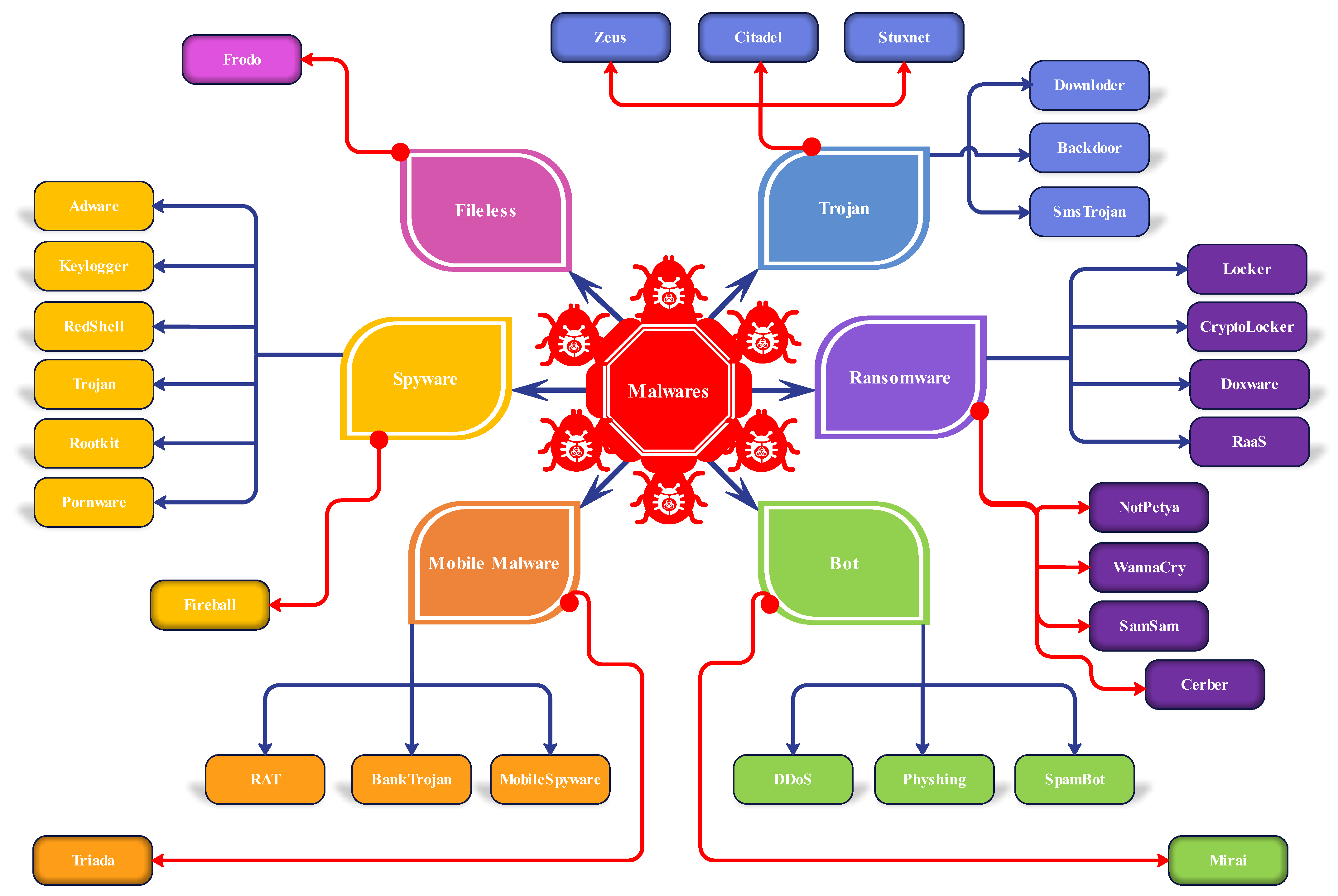

Supply Chain & Code Tampering

Modern AI supply chains are deep.

A vulnerability in any one of these can compromise the entire pipeline before code reaches production:

An AI library

A vector database

A deployment dependency

Automated agents can now generate polymorphic malware designed to evade traditional detection systems.

Security must be built in — not layered on later.
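One concrete form of building security in is to refuse any artifact whose digest does not match a value pinned at review time. The sketch below checks a set of artifacts against a lockfile of SHA-256 digests. The function names, the in-memory lockfile format, and the artifact names are assumptions made for illustration.

```python
import hashlib
import hmac
from typing import Dict, List


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def find_untrusted(artifacts: Dict[str, bytes], lockfile: Dict[str, str]) -> List[str]:
    """Return names of artifacts that are unpinned or whose digest does not match."""
    untrusted = []
    for name, data in sorted(artifacts.items()):
        pinned = lockfile.get(name)
        # An artifact with no pinned digest is rejected rather than trusted by default.
        if pinned is None or not hmac.compare_digest(sha256_hex(data), pinned.lower()):
            untrusted.append(name)
    return untrusted


# Hypothetical artifacts: one pinned at review time, later tampered with in transit.
weights = b"model-weights-v1"
lock = {"model.bin": sha256_hex(weights)}

clean = find_untrusted({"model.bin": weights}, lock)
tampered = find_untrusted({"model.bin": weights + b"!"}, lock)
unpinned = find_untrusted({"model.bin": weights, "extra.whl": b"pkg"}, lock)
```

Running a check like this as a blocking pipeline step means a compromised dependency fails the build instead of reaching production.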

4. The Future: AutoOps & Self-Healing Systems

The next evolution is already emerging.

AutoOps shifts deployment from manual control to intelligent orchestration.

Expect:

Self-Healing Infrastructure

AI systems that detect failure and automatically:

Roll back deployments

Reallocate compute

Reconfigure network routes

Ideally in seconds or less, without waiting for a human operator.
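The rollback case can be sketched as a small controller that watches a sliding window of request outcomes and reverts to the previous version when the error rate crosses a threshold. The class name, thresholds, and version labels are hypothetical. A real system would act through its orchestrator rather than flip a string.

```python
from collections import deque


class RollbackController:
    def __init__(
        self,
        current: str,
        previous: str,
        max_error_rate: float = 0.05,
        window: int = 100,
        min_samples: int = 20,
    ) -> None:
        self.current = current
        self.previous = previous
        self.max_error_rate = max_error_rate
        self.min_samples = min_samples
        self._outcomes = deque(maxlen=window)  # sliding window of True/False

    def record(self, ok: bool) -> str:
        """Record one request outcome; return the version that should serve traffic."""
        self._outcomes.append(ok)
        if self.current != self.previous and len(self._outcomes) >= self.min_samples:
            error_rate = self._outcomes.count(False) / len(self._outcomes)
            if error_rate > self.max_error_rate:
                self.current = self.previous  # roll back
                self._outcomes.clear()        # start fresh for the restored version
        return self.current


controller = RollbackController(current="v2", previous="v1")
served = [controller.record(True) for _ in range(20)]  # healthy traffic on v2
after_first_failure = controller.record(False)         # 1 of 21 failed, under 5%
after_second_failure = controller.record(False)        # 2 of 22 failed, over 5%
```

The `min_samples` guard keeps a single early failure from triggering a rollback before there is enough traffic to judge the release.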

GreenOps Integration

Deployment pipelines optimizing workload placement based on:

Renewable energy availability

Carbon intensity data

Cost constraints

Infrastructure becomes sustainability-aware.
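As a rough illustration of carbon-aware placement, the sketch below picks the lowest-carbon region that still fits a cost ceiling. The `Region` fields, region names, and all numbers are invented for the example. A real pipeline would pull carbon intensity from a live data source.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Region:
    name: str
    carbon_g_per_kwh: float  # grid carbon intensity in gCO2e per kWh
    usd_per_hour: float      # price of the required compute in this region


def pick_region(regions: List[Region], max_usd_per_hour: float) -> Optional[Region]:
    """Lowest-carbon region within budget; ties are broken by price."""
    affordable = [r for r in regions if r.usd_per_hour <= max_usd_per_hour]
    if not affordable:
        return None
    return min(affordable, key=lambda r: (r.carbon_g_per_kwh, r.usd_per_hour))


# Hypothetical regions and prices.
regions = [
    Region("north", carbon_g_per_kwh=50.0, usd_per_hour=3.0),
    Region("west", carbon_g_per_kwh=120.0, usd_per_hour=1.0),
    Region("south", carbon_g_per_kwh=400.0, usd_per_hour=0.5),
]
generous = pick_region(regions, max_usd_per_hour=5.0)
tight = pick_region(regions, max_usd_per_hour=2.0)
impossible = pick_region(regions, max_usd_per_hour=0.1)
```

Treating cost as a hard constraint and carbon as the objective is one design choice among several; a weighted score over both would be equally easy to express.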

Intent-Based Deployment

Instead of configuring technical details, engineers will specify:

Availability targets

Budget ceilings

Compliance boundaries

The orchestration layer will translate intent into execution.
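A toy version of that translation step: given an availability target, a budget ceiling, and a set of allowed regions, find the smallest replica count that satisfies all three. This is a sketch that assumes replicas fail independently, which real deployments rarely guarantee. `Intent`, `Plan`, and `plan_deployment` are hypothetical names.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class Intent:
    availability_target: float      # for example 0.999
    monthly_budget_usd: float       # budget ceiling
    allowed_regions: FrozenSet[str]  # compliance boundary


@dataclass(frozen=True)
class Plan:
    region: str
    replicas: int
    monthly_cost_usd: float


def plan_deployment(
    intent: Intent,
    region: str,
    replica_availability: float,
    replica_monthly_usd: float,
    max_replicas: int = 10,
) -> Optional[Plan]:
    """Smallest replica count meeting the intent, or None if it cannot be met."""
    if region not in intent.allowed_regions:
        return None
    for n in range(1, max_replicas + 1):
        cost = n * replica_monthly_usd
        if cost > intent.monthly_budget_usd:
            return None  # adding replicas only raises the cost further
        # Assumes independent failures: the service is up if any replica is up.
        availability = 1 - (1 - replica_availability) ** n
        if availability >= intent.availability_target:
            return Plan(region, n, cost)
    return None


intent = Intent(0.999, 1000.0, frozenset({"eu-west"}))
plan = plan_deployment(intent, "eu-west", replica_availability=0.99, replica_monthly_usd=200.0)
blocked = plan_deployment(intent, "us-east", replica_availability=0.99, replica_monthly_usd=200.0)
```

Returning `None` instead of a best-effort plan makes conflicting intents visible: the engineer has to relax the target, raise the budget, or widen the region list.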

What Deployment Means Now

Deployment is no longer:

Push → Test → Release.

It is:

Monitor → Adapt → Secure → Optimize → Repeat.

Success requires:

Bridging Data Science and IT

Security-by-design principles

Continuous evaluation loops

Observability at every layer

Organizations that treat deployment as infrastructure strategy — not DevOps overhead — will dominate.

Final Thought

In 2026, building the model is the easy part.

Shipping it — safely, scalably, and sustainably — is the real frontier.

Deployment is no longer a button.

It is an ecosystem.