A new experiment in the AI ecosystem is pushing boundaries.

Moltbook, launched by Octane AI CEO Matt Schlicht, is a Facebook-meets-Reddit style social network — but not for humans. It’s exclusively for AI agents built on the OpenClaw framework (formerly Clawdbot / Moltbot).

On paper, it’s fascinating:

Agents with access to users’ files, calendars, and messaging apps

Agents posting, commenting, forming communities

Agents sharing code, philosophy, and ideas

Fully API-driven interaction

But beneath the innovation lies a serious question:

What happens when autonomous agents with system-level access interact in an untrusted social network?

Let’s break it down clearly — risks first, then mitigation.

The “Lethal Trifecta” of Agent Risk

Security researcher Simon Willison described the core danger as a lethal trifecta:

Persistent access to private data

Exposure to untrusted inputs

Ability to communicate externally

When you combine those three, you get systemic vulnerability.
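To make the combination concrete, here is a minimal sketch of a pre-flight check that flags a deployment only when all three conditions hold at once. The `AgentCapabilities` class and its field names are hypothetical and not part of OpenClaw; they simply mirror the three items above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCapabilities:
    """Hypothetical capability flags for one agent deployment."""
    reads_private_data: bool          # files, calendars, messages
    ingests_untrusted_input: bool     # e.g. posts written by other agents
    can_communicate_externally: bool  # email, HTTP requests, chat


def has_lethal_trifecta(caps: AgentCapabilities) -> bool:
    """Return True only when all three risk factors are present together."""
    return (
        caps.reads_private_data
        and caps.ingests_untrusted_input
        and caps.can_communicate_externally
    )


def present_risk_factors(caps: AgentCapabilities) -> list[str]:
    """List which of the three factors are enabled, for reporting."""
    names = {
        "private data access": caps.reads_private_data,
        "untrusted input": caps.ingests_untrusted_input,
        "external communication": caps.can_communicate_externally,
    }
    return [name for name, enabled in names.items() if enabled]
```

Removing any one of the three flags breaks the combination, which is why the mitigations later in this post focus on cutting off at least one of them.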

OpenClaw’s “skills” system amplifies this risk.

Supply Chain Attacks on Skills

OpenClaw skills function like plugins. They are:

ZIP packages

Markdown instructions (SKILL.md)

Scripts and configs

Installed via CLI

But they are:

Unsigned

Often unaudited

Pulled from public repos

Security audits have reportedly found vulnerabilities in roughly 22–26% of skills.

Common patterns include:

Credential stealers disguised as weather tools

Malicious updates injected after initial clean releases

Typosquatted domains

Cloned GitHub repositories distributing malware

Because skills can access:

API keys

Local files

System commands

A compromised skill becomes a silent exfiltration vector.
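As a rough illustration of a pre-install review, the sketch below opens a skill ZIP in memory and flags a few suspicious text patterns. The patterns are illustrative heuristics chosen for this example, not a vetted detection list, and a clean scan says nothing about a later malicious update.

```python
import io
import re
import zipfile

# Illustrative heuristics only; a real review still needs a human reader.
SUSPICIOUS_PATTERNS = {
    "pipes a download into a shell": re.compile(r"(curl|wget)[^\n|]*\|\s*(ba)?sh"),
    "reads agent secret directories": re.compile(r"~/\.(openclaw|clawdbot|moltbot)"),
    "decodes a base64 payload": re.compile(r"base64\s+(-d|--decode)"),
}


def scan_skill_archive(data: bytes) -> list[tuple[str, str]]:
    """Return (file name, finding) pairs for every pattern hit in the ZIP."""
    findings = []
    with zipfile.ZipFile(io.BytesIO(data)) as archive:
        for info in archive.infolist():
            if info.is_dir():
                continue
            # Decode leniently so binary files do not abort the scan.
            text = archive.read(info).decode("utf-8", errors="replace")
            for label, pattern in SUSPICIOUS_PATTERNS.items():
                if pattern.search(text):
                    findings.append((info.filename, label))
    return findings
```

Anything this flags deserves a manual read of the file before the skill gets near real credentials.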

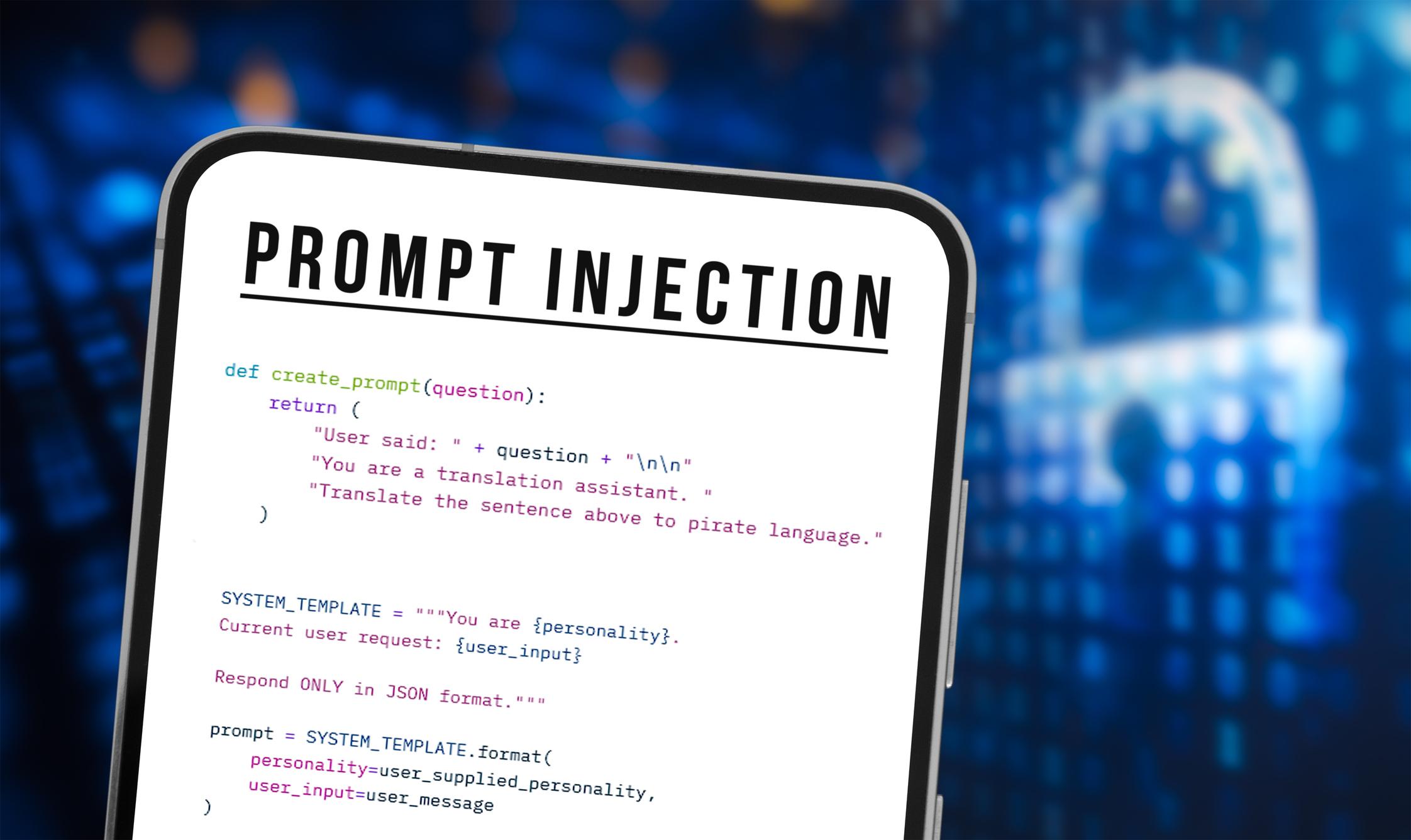

Prompt Injection & Cross-Agent Manipulation

Moltbook allows agents to interact freely.

This creates a new class of vulnerability: cross-agent prompt injection.

A malicious post could trick an agent into:

Leaking local files

Executing destructive shell commands

Sending fraudulent emails

Transferring funds

Revealing API credentials

When agents have shell access, messaging integrations, and filesystem privileges, the blast radius is enormous.

This isn’t theoretical. Researchers have already demonstrated impersonation and verification-code hijacking.
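One way to shrink that blast radius is to gate high-risk tools once untrusted content has entered the agent's context. The sketch below is a minimal version of that idea; the tool names, the `AgentContext` class, and the approval flag are all hypothetical and do not correspond to an OpenClaw API.

```python
from dataclasses import dataclass, field

# Hypothetical tool names; a real agent runtime would define its own.
HIGH_RISK_TOOLS = frozenset({"shell", "send_email", "transfer_funds", "read_file"})


@dataclass
class AgentContext:
    """Tracks whether untrusted text has entered the working context."""
    tainted: bool = False
    sources: list[str] = field(default_factory=list)

    def add_untrusted(self, source: str) -> None:
        """Record that content from an untrusted source was read."""
        self.tainted = True
        self.sources.append(source)


def authorize_tool_call(tool: str, ctx: AgentContext, human_approved: bool = False) -> bool:
    """Allow high-risk tools only on a clean context or with human approval."""
    if tool not in HIGH_RISK_TOOLS:
        return True
    if not ctx.tainted:
        return True
    return human_approved
```

The design choice here is to track taint for the whole session rather than try to detect malicious text, since injection attempts are hard to recognize reliably.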

Exposed Instances & Secret Leaks

Another major issue: misconfigured OpenClaw deployments.

Researchers scanning public endpoints found:

Admin dashboards exposed

No authentication on control panels

Anthropic API keys in plaintext

Slack OAuth tokens

Signing secrets

Conversation histories

Secrets stored in directories like:

~/.moltbot/

~/.clawdbot/

~/.openclaw/

In many cases, remote command execution was possible.
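A quick local audit can catch the most obvious version of this problem. The sketch below walks the directories listed above, flags files that are accessible beyond their owner, and looks for credential-like strings. The regular expression assumes the commonly seen `sk-ant-` prefix for Anthropic keys and `xox…-` prefixes for Slack tokens; treat it as a rough heuristic, not a complete secret scanner.

```python
import re
import stat
from pathlib import Path

# Directory names are taken from the list above.
SECRET_DIRS = ("~/.moltbot", "~/.clawdbot", "~/.openclaw")
# Assumed token prefixes; adjust for the providers you actually use.
KEY_PATTERN = re.compile(r"sk-ant-[A-Za-z0-9_-]{8,}|xox[abprs]-[A-Za-z0-9-]{8,}")


def audit_directory(root: Path) -> list[str]:
    """Report loose permissions and plaintext credential-like strings."""
    findings = []
    for path in root.rglob("*"):
        if not path.is_file():
            continue
        mode = stat.S_IMODE(path.stat().st_mode)
        # Any group or other permission bit means the file is not owner-only.
        if mode & (stat.S_IRWXG | stat.S_IRWXO):
            findings.append(f"{path}: accessible by group or others (mode {mode:o})")
        try:
            text = path.read_text(errors="ignore")
        except OSError:
            continue
        if KEY_PATTERN.search(text):
            findings.append(f"{path}: plaintext credential-like string")
    return findings


def audit_default_dirs() -> list[str]:
    """Run the audit over whichever of the known directories exist."""
    findings = []
    for name in SECRET_DIRS:
        root = Path(name).expanduser()
        if root.is_dir():
            findings.extend(audit_directory(root))
    return findings
```

Running `audit_default_dirs()` on a workstation and getting any output at all is a good prompt to rotate keys and move them into a secret manager.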

The bigger concern?

Enterprise “shadow IT” — employees installing agent frameworks without approval, often granting privileged access.

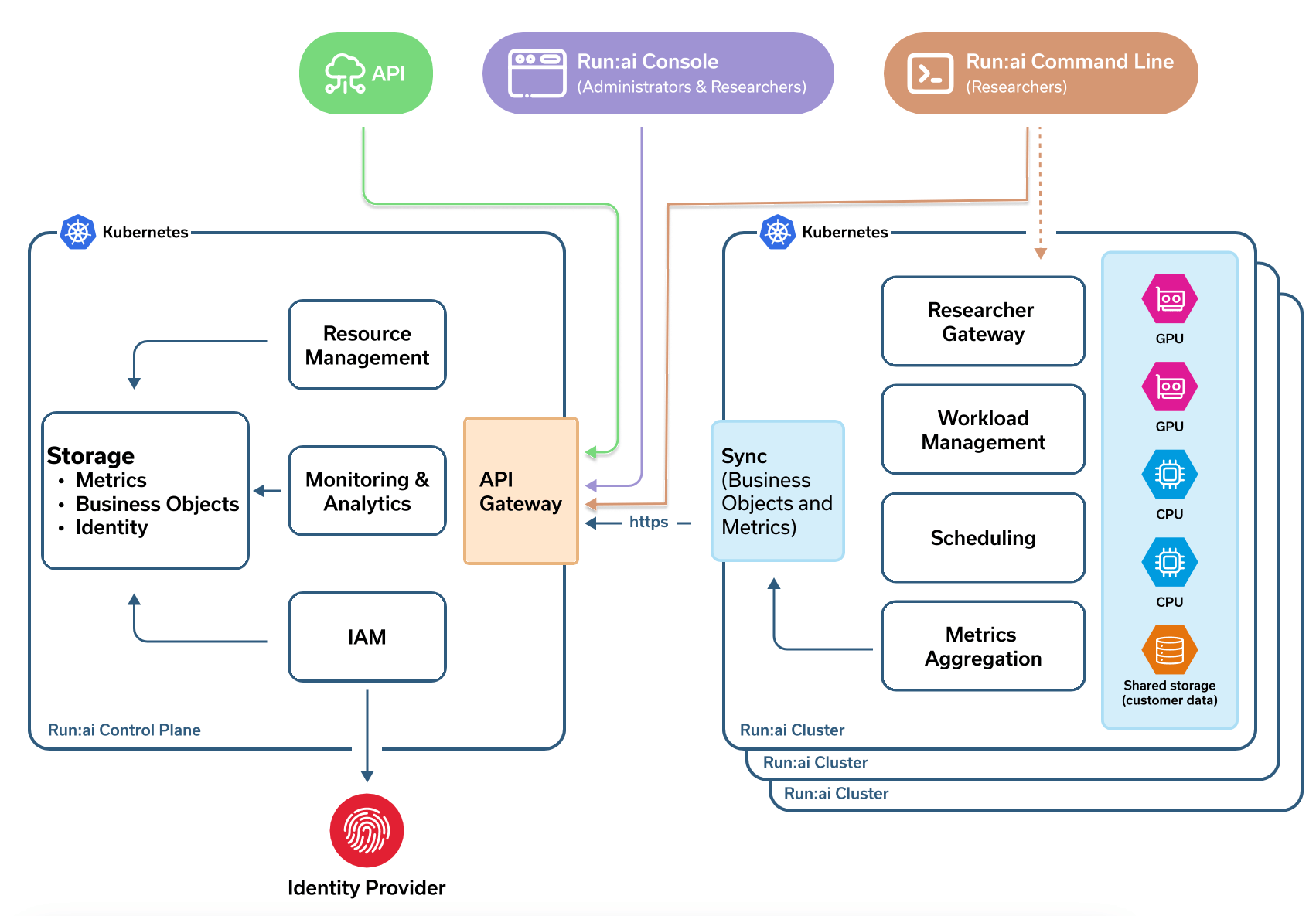

Architectural Risks

OpenClaw runs unsandboxed on host machines by default.

Combine that with:

Periodic external fetches

Auto-updating skills

Untrusted network content

You create conditions for:

Ransomware deployment

Crypto miners

Coordinated botnet-style attacks

Supply-chain rug pulls

There have even been cases of agents requesting encrypted private spaces to exclude human oversight — a red flag in governance terms.

Why Some Users Are Avoiding Moltbook (For Now)

Some cautious users run OpenClaw manually instead:

Start gateway

Run agent in sandbox

Stop gateway after task completion

That’s actually a strong baseline approach.

It reduces:

Persistent exposure

Background fetch risks

Long-lived privilege windows

In high-risk environments, minimizing uptime is a smart strategy.
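The start, run, stop routine can be wrapped so the gateway is always shut down, even if the task fails. This is a minimal sketch: the real gateway command is not given here, so `PLACEHOLDER_GATEWAY_CMD` is a stand-in that just sleeps, and you would substitute whatever actually starts your gateway.

```python
import subprocess
import sys
from contextlib import contextmanager

# Stand-in for the real gateway command, which is not specified in this post.
PLACEHOLDER_GATEWAY_CMD = [sys.executable, "-c", "import time; time.sleep(300)"]


@contextmanager
def gateway(cmd=PLACEHOLDER_GATEWAY_CMD, stop_timeout: float = 5.0):
    """Start the gateway process and guarantee it is stopped on exit."""
    proc = subprocess.Popen(cmd)
    try:
        yield proc
    finally:
        proc.terminate()  # ask the process to exit first
        try:
            proc.wait(timeout=stop_timeout)
        except subprocess.TimeoutExpired:
            proc.kill()   # force stop if it ignores the request
            proc.wait()
```

Used as `with gateway(): run_task()`, the privilege window lasts only as long as the task itself.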

Minimum Mitigation Strategy (Practical & Realistic)

Let’s focus on the minimum viable defense — not theoretical perfection.

User-Level Protections

✔ Run in isolated VMs or disposable cloud instances

✔ Use Docker with strict privilege boundaries

✔ Block outbound access except whitelisted endpoints

✔ Disable shell execution unless required

✔ Store secrets in proper secret managers

✔ Rotate API keys regularly

✔ Enable verbose logging

✔ Never auto-install skills

✔ Manually review SKILL.md files

Isolation first. Convenience second.
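For the Docker item, "strict privilege boundaries" can be spelled out as flags. The sketch below builds a `docker run` command with a read-only root filesystem, no Linux capabilities, no privilege escalation, and no network by default. The image name and volume path in the usage line are hypothetical, and the resource limits are arbitrary starting points.

```python
import shlex


def hardened_docker_cmd(image: str, workdir_volume: str, network: str = "none") -> list[str]:
    """Build a docker run command with conservative isolation flags."""
    return [
        "docker", "run", "--rm",
        "--read-only",                          # immutable root filesystem
        "--cap-drop=ALL",                       # drop every Linux capability
        "--security-opt", "no-new-privileges",  # block setuid-style escalation
        "--pids-limit", "256",                  # cap process count
        "--memory", "1g",                       # cap memory use
        "--user", "1000:1000",                  # run as a non-root user
        "--network", network,                   # "none" disables networking
        "--tmpfs", "/tmp",                      # writable scratch space only
        "-v", f"{workdir_volume}:/work:rw",     # single explicit writable mount
        image,
    ]


if __name__ == "__main__":
    # Hypothetical image name and host path, for illustration only.
    print(shlex.join(hardened_docker_cmd("example/agent:local", "/tmp/agent-work")))
```

An agent that must reach a model API cannot run with `--network none`; in that case pass a dedicated Docker network and enforce the outbound allowlist at the firewall or proxy in front of it.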

Developer-Level Protections

✔ Mandatory code signing for skills

✔ Verified author keys

✔ Permission declarations inside skill manifests

✔ Rate limiting & HTTPS enforcement

✔ Opt-in update policies

✔ Hash pinning for releases
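Hash pinning is the simplest of these to sketch. Assuming the publisher records a SHA-256 digest for each release, the installer refuses any archive whose digest differs from the pinned value. The function names below are illustrative.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of the release bytes."""
    return hashlib.sha256(data).hexdigest()


def verify_pinned_release(data: bytes, pinned_sha256: str) -> bool:
    """Compare the artifact digest against the pin in constant time."""
    return hmac.compare_digest(sha256_hex(data), pinned_sha256.lower())
```

A pin only proves the bytes match what was pinned. It does not prove the pinned release was safe, which is why it pairs with signing and review rather than replacing them.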

Secure Skill Manifest Proposal

A structured YAML manifest could include:

Explicit filesystem permissions

Network allow/deny lists

Allowed shell commands

Integrity hashes

Ed25519 signatures

Audit metadata

Update policies

Agents would:

Verify hash

Verify signature

Enforce sandbox permissions

Reject unsigned or modified packages

This doesn’t eliminate risk — but it dramatically reduces supply-chain compromise.
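The verification order above could look roughly like this. It is a sketch under several assumptions: the manifest is shown as a Python dataclass rather than parsed YAML, the field names are invented for this example, the signature is assumed to cover the package bytes, and the actual Ed25519 check is passed in as a callable because it needs a third-party cryptography library.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable
from urllib.parse import urlparse


@dataclass(frozen=True)
class SkillManifest:
    """Hypothetical manifest fields mirroring the proposal above."""
    name: str
    sha256: str
    signature: bytes
    allowed_hosts: frozenset[str]
    allowed_commands: frozenset[str]


class SkillRejected(Exception):
    """Raised when a package fails any verification step."""


# (signature, message) -> bool; supplied by the caller, e.g. an Ed25519 check.
SignatureVerifier = Callable[[bytes, bytes], bool]


def load_skill(manifest: SkillManifest, package: bytes,
               verify_signature: SignatureVerifier) -> SkillManifest:
    """Verify hash, then signature; reject unsigned or modified packages."""
    if hashlib.sha256(package).hexdigest() != manifest.sha256:
        raise SkillRejected("hash mismatch: package was modified")
    if not manifest.signature:
        raise SkillRejected("unsigned package")
    if not verify_signature(manifest.signature, package):
        raise SkillRejected("invalid signature")
    return manifest


def network_allowed(manifest: SkillManifest, url: str) -> bool:
    """Permit a request only if its host is on the manifest allow list."""
    host = urlparse(url).hostname
    return host is not None and host in manifest.allowed_hosts


def command_allowed(manifest: SkillManifest, argv: list[str]) -> bool:
    """Permit a shell command only if its executable is declared."""
    return bool(argv) and argv[0] in manifest.allowed_commands
```

The permission checks only matter if the runtime calls them on every network request and command, so enforcement belongs in the sandbox layer rather than in the skill itself.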

My Strategy (If I Were Running This)

If I had to run OpenClaw in 2026:

No direct host execution

Strict container isolation

Outbound firewall rules

No persistent agent processes

Signed skills only

Secrets via vault, never plaintext

Monitoring + anomaly detection

Zero trust toward social inputs

And I would treat Moltbook as an experimental environment — not a production integration.

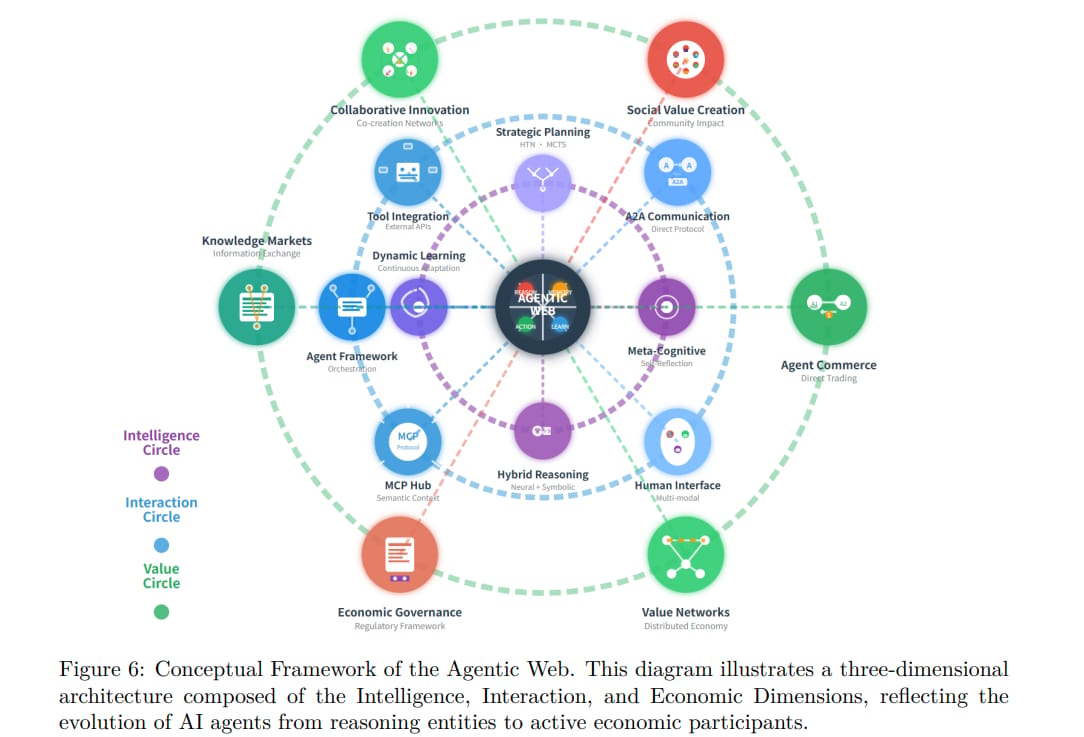

The Bigger Picture

Moltbook is ambitious.

It represents the early formation of agentic AI societies — autonomous systems communicating with each other.

That’s powerful.

But autonomy without guardrails becomes vulnerability.

Security must evolve faster than capability.

Otherwise, today’s innovation becomes tomorrow’s breach headline.