Artificial intelligence circles often use the phrase “recursive self-improvement” (RSI) almost casually. But when we look at systems like OpenClaw, the real question becomes:

Is this genuine recursive self-improvement — or a powerful illusion created by tools, memory, and configuration changes?

Let’s unpack this carefully.

1️⃣ What Is Recursive Self-Improvement (RSI)?

According to the definition popularized in discussions about AGI:

Recursive self-improvement is when an intelligent system improves its own architecture or capabilities in a way that makes it better at improving itself — potentially leading to accelerating capability growth.

True RSI requires four core elements:

Self-representation – The system can model its own structure and behavior.

Autonomous modification – It can change its own code, policies, or parameters.

Evaluation loop – It tests whether changes actually improve performance.

Compounding gains – Improvements make future improvements easier.

Most AI agents today don’t meet all four conditions.

2️⃣ What Is OpenClaw?

OpenClaw is not a foundation model. It is better described as:

An agent operating system that connects messaging platforms, tools, memory, and LLMs into a controllable runtime.

It typically includes:

Gateway layer – Routes inputs from Telegram, Discord, etc.

Agent runtime – Runs the planning loop (plan → act → observe → reflect)

Tool executor – Shell, browser, APIs, filesystem access

Memory system – Logs, summaries, and structured knowledge

The intelligence comes from external LLMs.

OpenClaw provides the scaffolding.
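As a rough illustration of that split, the plan → act → observe → reflect loop can be sketched in a few lines. This is a minimal sketch, not OpenClaw's actual API: `AgentLoop`, the `"tool: argument"` reply format, and the in-memory `memory` list are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentLoop:
    """Hypothetical runtime: the model is external, the loop is scaffolding."""
    llm: Callable[[str], str]                    # external model: prompt in, text out
    tools: dict[str, Callable[[str], str]]       # tool executor
    memory: list[str] = field(default_factory=list)

    def step(self, task: str) -> str:
        # Plan: ask the model what to do; assumed reply format is "tool: argument".
        plan = self.llm(f"task={task}; memory={self.memory}")
        name, _, arg = plan.partition(":")
        name, arg = name.strip(), arg.strip()
        # Act and observe: run the chosen tool, or report that it is unknown.
        tool = self.tools.get(name)
        observation = tool(arg) if tool else f"unknown tool {name!r}"
        # Reflect: record the outcome so later plans can see it.
        self.memory.append(f"{task} -> {name} -> {observation}")
        return observation
```

Swapping the `llm` callable changes how well the agent plans, while the loop itself stays the same, which is the sense in which the runtime is scaffolding.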

3️⃣ Where OpenClaw Does Self-Improve

OpenClaw supports self-modification — but mostly at the environment layer, not the model layer.

🔧 Tool & Skill Expansion

The agent can create scripts.

It can edit tool definitions.

It can wire new capabilities into its registry.

This looks like self-improvement because the agent’s toolset grows over time, even though the underlying model stays the same.
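A minimal sketch of that kind of growth, assuming a simple in-process registry (the `ToolRegistry` class and the `word_count` tool are hypothetical names, not part of OpenClaw):

```python
from typing import Callable


class ToolRegistry:
    """Hypothetical registry: maps tool names to callables."""

    def __init__(self) -> None:
        self._tools: dict[str, Callable[..., object]] = {}

    def register(self, name: str, fn: Callable[..., object]) -> None:
        # Registering under an existing name replaces the old definition.
        self._tools[name] = fn

    def names(self) -> list[str]:
        return sorted(self._tools)

    def call(self, name: str, *args: object) -> object:
        return self._tools[name](*args)


registry = ToolRegistry()
registry.register("word_count", lambda text: len(text.split()))
```

Nothing about the model changes when `register` is called. Only the set of actions available to it grows.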

🧠 Memory Consolidation

It summarizes past sessions.

It extracts lessons.

It updates persistent knowledge files.

Future decisions can use these distilled memories.

⚙️ Configuration Editing

It can switch models to trade off cost and performance.

It can rewrite prompts.

It can adjust routing logic.

But here’s the key distinction:

It does not retrain its neural weights.

It does not redesign its core reasoning algorithm.

This is runtime adaptation, not deep recursive optimization.

4️⃣ Why the RSI Loop Breaks in Practice

Even if OpenClaw can modify itself, several issues prevent true RSI.

4.1 No Clear Fitness Function

Real evolution needs selection pressure.

In most agent setups, evaluation is:

“Did the user complain?”

“Did the task succeed?”

Both signals are weak and noisy: a silent user is not proof of quality, and a task can succeed by luck.

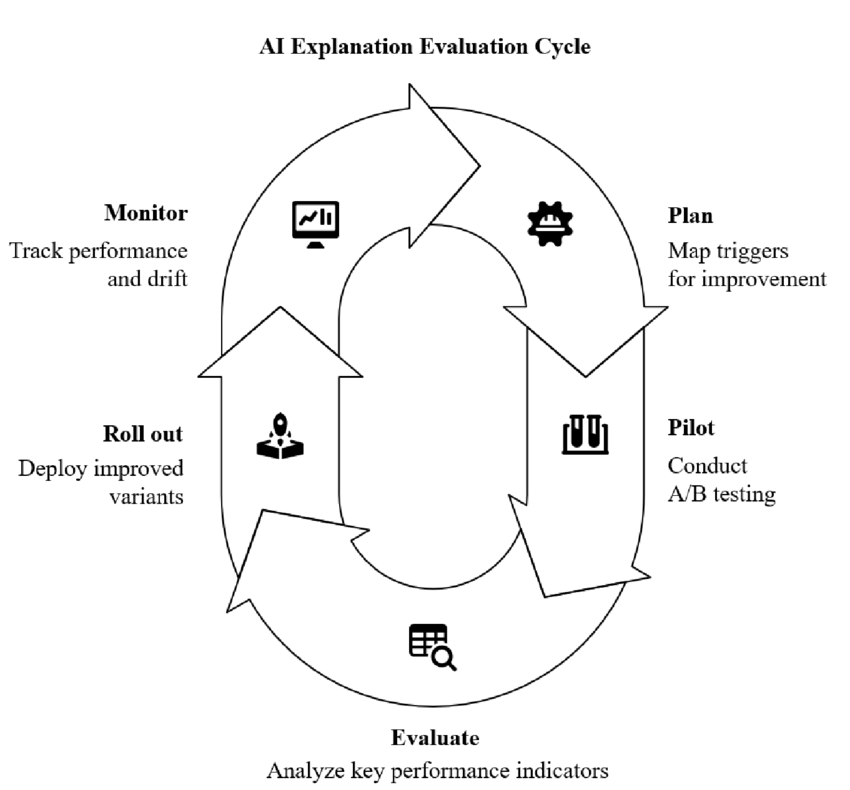

Fix:

Introduce test suites, adversarial evaluation, shadow deployments, and staged rollouts.
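One way to make the selection pressure explicit is to gate every self-modification on a fixed test suite. The sketch below assumes agents can be treated as plain `str -> str` callables and that exact-match scoring is good enough. The names `score`, `accept_change`, and `min_gain` are illustrative only.

```python
from typing import Callable

Agent = Callable[[str], str]
Case = tuple[str, str]  # (input, expected output)


def score(agent: Agent, suite: list[Case]) -> float:
    """Fraction of suite cases the agent answers exactly right."""
    passed = sum(1 for prompt, expected in suite if agent(prompt) == expected)
    return passed / len(suite)


def accept_change(baseline: Agent, candidate: Agent,
                  suite: list[Case], min_gain: float = 0.0) -> bool:
    """Keep a change only if it scores at least baseline + min_gain."""
    return score(candidate, suite) >= score(baseline, suite) + min_gain
```

Shadow deployments and staged rollouts follow the same pattern, with live traffic standing in for the fixed suite.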

4.2 Planner = Executor = Editor

When the same loop:

Solves tasks

Edits tools

Changes configuration

You risk prompt injection turning into persistent corruption, because injected instructions can be written into tools or configuration that outlive the session.

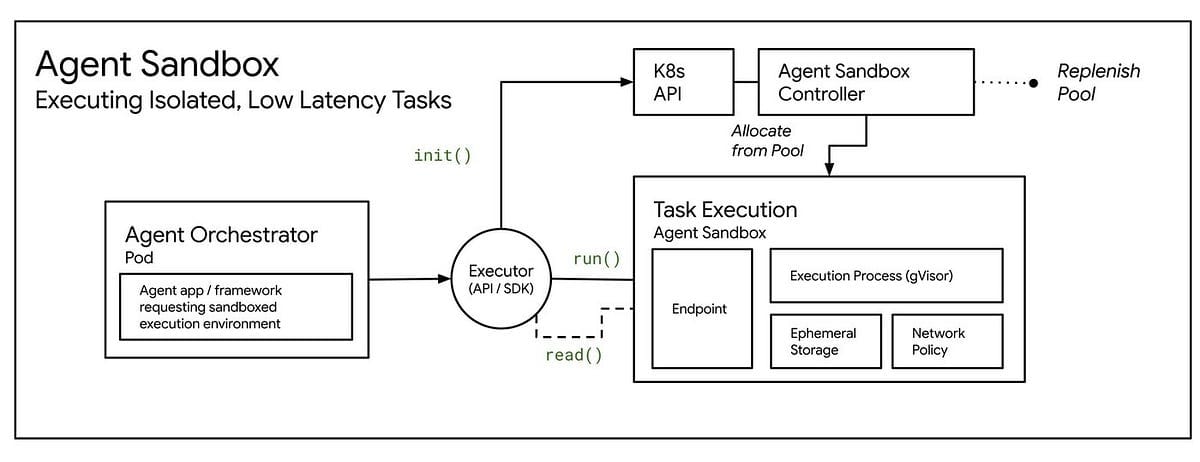

Fix:

Separate roles:

Planner

Executor

Self-editor (restricted scope)

Self-modification should require policy checks or approval gates.
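A policy check of this kind might look like the following. It is a sketch under stated assumptions: the `EditRequest` shape, the `origin` labels, and the `ALLOWED_PREFIXES` directories are hypothetical and would depend on how a given deployment lays out its files.

```python
from dataclasses import dataclass

# Hypothetical directories the self-editor is allowed to touch.
ALLOWED_PREFIXES = ("skills/", "prompts/")


@dataclass(frozen=True)
class EditRequest:
    path: str             # relative path the edit would write to
    origin: str           # "self_editor" or "task_context"
    approved: bool = False


def may_apply(req: EditRequest) -> bool:
    """Apply an edit only if it comes from the right role, stays in scope, and is approved."""
    if req.origin != "self_editor":
        return False      # edits proposed from task context are never applied
    if ".." in req.path.split("/"):
        return False      # no climbing out of the allowed directories
    if not req.path.startswith(ALLOWED_PREFIXES):
        return False      # outside the self-editor's restricted scope
    return req.approved   # still needs an explicit approval flag
```

The important property is that content read while solving a task can never reach the write path directly, even if it convinces the planner that an edit is a good idea.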

4.3 Memory Collapse

Long sessions hit context limits.

Summaries distort details.

Old failures are forgotten.

Result: The agent repeats mistakes.

Fix:

Structured policy files

Persistent “lessons learned”

Periodic consolidation jobs
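A consolidation job can be very small. The sketch below assumes session logs are lists of dicts with `tool`, `error`, and `status` keys and that lessons live in a JSON file. Both the log schema and the file layout are assumptions for illustration.

```python
import json
from collections import Counter
from pathlib import Path


def consolidate(session_logs: list[dict], lessons_path: Path) -> dict:
    """Merge failure records from session logs into a persistent lessons file."""
    lessons = json.loads(lessons_path.read_text()) if lessons_path.exists() else {}
    # Count each (tool, error) pair among failed steps only.
    counts = Counter(
        (e["tool"], e["error"]) for e in session_logs if e.get("status") == "failed"
    )
    for (tool, error), n in counts.items():
        entry = lessons.setdefault(
            f"{tool}:{error}", {"tool": tool, "error": error, "count": 0}
        )
        entry["count"] += n
    lessons_path.write_text(json.dumps(lessons, indent=2, sort_keys=True))
    return lessons
```

Because the counts are structured data rather than free-text summaries, an old failure is not lost when a later summary is rewritten.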

4.4 Safety Stops Instead of Shaping

If a tool is blocked, the agent simply fails the task and retains nothing about why.

True RSI would:

Internalize the constraint

Update strategy

Avoid the violation next time

Safety errors should become learning signals, not dead ends.
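As a minimal sketch of that idea, a blocked call can be recorded once and avoided afterwards. The `ToolBlocked` exception and `ConstraintMemory` class are hypothetical, and a real runtime would also feed the recorded reason back into planning.

```python
class ToolBlocked(Exception):
    """Raised by the (hypothetical) safety layer when a tool call is denied."""


class ConstraintMemory:
    def __init__(self):
        self.blocked = {}  # tool name -> reason it was blocked

    def run(self, tool_name, tool, arg, fallback=None):
        if tool_name in self.blocked:
            # Constraint already internalized: do not retry the violation.
            return fallback(arg) if fallback else None
        try:
            return tool(arg)
        except ToolBlocked as exc:
            # Turn the safety error into a stored signal, then change strategy.
            self.blocked[tool_name] = str(exc)
            return fallback(arg) if fallback else None
```

On the second attempt the blocked tool is never invoked. That is the difference between a dead end and a learned constraint.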

5️⃣ Security Implications of Self-Editing Agents

Self-modification + system access = serious risk.

🚨 Expanded Attack Surface

Each new script or tool increases exposure.

🚨 Prompt Injection = Code Injection

If task context and self-editing context are mixed, a malicious web page could effectively install backdoors.

🚨 Privilege Escalation

With shell + network access, a compromised agent could:

Modify allowlists

Reconfigure endpoints

Persist malicious startup scripts

🚨 Audit Loss

If the agent edits its own logs, forensics become unreliable.

6️⃣ Hardening an OpenClaw-Style System

To explore self-improvement safely:

🛡️ Enforce Least Privilege

Containerized tools

Restricted file access

Network isolation
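Restricted file access, for instance, can start with a check that every path a tool touches resolves inside a sandbox root. This is only a sketch, and `is_within` is a hypothetical helper. Containers and OS-level permissions remain the stronger control.

```python
from pathlib import Path


def is_within(root: Path, candidate: str) -> bool:
    """True if candidate, interpreted relative to root, stays inside root."""
    root = root.resolve()
    # resolve() collapses ".." segments and follows symlinks; an absolute
    # candidate replaces root entirely when joined, so it fails the check.
    target = (root / candidate).resolve()
    return target == root or root in target.parents
```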

📦 Treat Skills as Third-Party Code

Versioning

Signatures

Rollback support
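The three properties can be combined in one small store. The sketch below uses an HMAC from Python's standard library as a stand-in for a real signature scheme; a production setup would more likely use asymmetric signatures, and in either case the key must live outside the agent's reach. `SkillStore` and its methods are hypothetical names.

```python
import hashlib
import hmac


def sign_skill(source: bytes, key: bytes) -> str:
    return hmac.new(key, source, hashlib.sha256).hexdigest()


def verify_skill(source: bytes, signature: str, key: bytes) -> bool:
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(sign_skill(source, key), signature)


class SkillStore:
    """Hypothetical store: signed, versioned skills with rollback."""

    def __init__(self, key: bytes) -> None:
        self._key = key
        self._versions: dict[str, list[bytes]] = {}

    def publish(self, name: str, source: bytes, signature: str) -> int:
        if not verify_skill(source, signature, self._key):
            raise ValueError("signature mismatch")
        self._versions.setdefault(name, []).append(source)
        return len(self._versions[name])  # 1-based version number

    def current(self, name: str) -> bytes:
        return self._versions[name][-1]

    def rollback(self, name: str) -> bytes:
        versions = self._versions[name]
        if len(versions) < 2:
            raise ValueError("no earlier version to roll back to")
        versions.pop()
        return versions[-1]
```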

🧩 Separate Duties

Different processes for:

Planning

Execution

Self-editing

📜 Immutable Logs

Cryptographically verifiable change history.
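A hash chain is one common way to get this property. In the sketch below each entry commits to the hash of the previous one, so editing or removing an earlier entry breaks verification. The entry fields and the `GENESIS` constant are assumptions, and the scheme only helps if the latest hash is also stored somewhere the agent cannot write.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(index: int, change: str, prev: str) -> str:
    body = {"index": index, "change": change, "prev": prev}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: list[dict], change: str) -> dict:
    prev = log[-1]["hash"] if log else GENESIS
    entry = {"index": len(log), "change": change, "prev": prev,
             "hash": _digest(len(log), change, prev)}
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    prev = GENESIS
    for i, entry in enumerate(log):
        expected = _digest(entry["index"], entry["change"], entry["prev"])
        if entry["index"] != i or entry["prev"] != prev or entry["hash"] != expected:
            return False
        prev = entry["hash"]
    return True
```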

7️⃣ So… Is OpenClaw an RSI System?

Short answer:

Not in the strict AGI sense.

It supports:

Environmental self-rewiring

Tool growth

Memory-based adaptation

But it lacks:

Autonomous model redesign

Systematic selection pressure

Compounding algorithmic self-optimization

What it is:

An early experimental architecture that hints at RSI — without achieving closed-loop recursive intelligence growth.

It is better described as:

A self-configuring agent runtime with limited recursive characteristics.

Final Thought

The illusion of RSI often comes from visible growth in tools and memory.

Moving from that illusion toward true RSI would require:

Structured evaluation

Separated self-modification channels

Formal objectives

Secure change management

And eventually, model-level redesign

OpenClaw sits at the frontier between:

Adaptive orchestration

and

Genuine recursive self-improvement.

That boundary — not the hype — is where the real architectural work begins.