Artificial Intelligence doesn’t always need to start from zero.

One of the most powerful techniques in modern deep learning is Transfer Learning — a method that allows models to reuse previously learned knowledge to solve new, related problems faster and more efficiently.

Instead of training a neural network from scratch, we build on top of existing intelligence.

Let’s break it down.

🧠 What is Transfer Learning?

Transfer learning is a machine learning technique where a model trained for one task is reused as the starting point for a second, related task.

In simple terms:

Don’t reinvent the wheel — improve it.

Pretrained models have already learned general patterns, such as edges in images or sentence structure in text. We reuse this knowledge and adapt it to a new problem.

Why It Matters

⏳ Saves training time

💻 Reduces computational cost

📊 Improves performance with small datasets

🔥 Makes deep learning practical for teams without huge datasets or compute budgets

🌍 Where Is Transfer Learning Used?

Transfer learning is widely used in:

Image Classification

Object Detection

Natural Language Processing (NLP)

Speech Recognition

From detecting objects in photos to powering chatbots and voice assistants — transfer learning is everywhere.

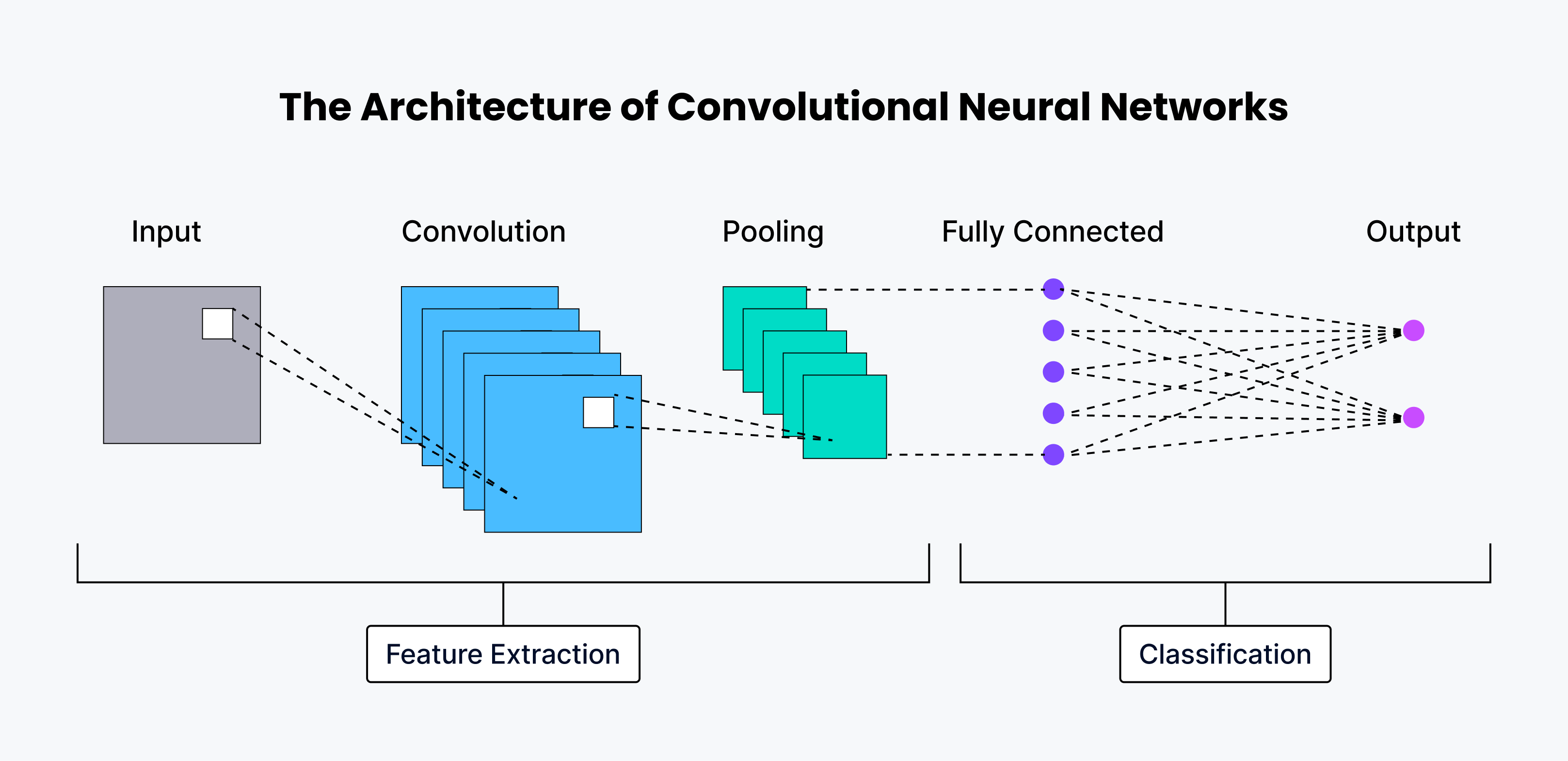

⚙️ How Transfer Learning Works

Transfer learning happens in two key stages:

1️⃣ Pre-Training: Learning the Basics

In the pre-training phase:

A model is trained on a large dataset (e.g., ImageNet).

It learns general patterns:

Edges

Textures

Shapes

Object structures

These features become reusable knowledge.

Think of it as learning basic math before solving physics problems.

2️⃣ Fine-Tuning: Adapting to a New Task

Once pre-trained, the model is adapted to a smaller, specific dataset.

This step is called fine-tuning.

There are two main approaches:

🔒 Freezing Layers

Early layers remain unchanged.

Only the later layers (often a newly added output layer) are trained on the new dataset.

Works well because early layers capture general features.
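The freezing idea can be sketched in plain Python, without any framework. This is a toy illustration under stated assumptions, not a production recipe: `frozen_features`, `train_head`, the weights, and the dataset are all hypothetical, and the tiny fixed matrix only stands in for the early layers of a real pretrained network.

```python
# Toy sketch of layer freezing: the "pretrained" layer is never updated,
# only the small head on top of it is trained.

def frozen_features(x, backbone):
    """Apply the frozen layer: a linear map followed by ReLU."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in backbone]

def train_head(data, backbone, head, lr=0.05, epochs=200):
    """Fit the head with plain SGD on squared error; backbone is read-only."""
    head = list(head)
    for _ in range(epochs):
        for x, y in data:
            feats = frozen_features(x, backbone)
            error = sum(h * f for h, f in zip(head, feats)) - y
            # Gradient step for the head weights only.
            head = [h - lr * error * f for h, f in zip(head, feats)]
    return head

# Hypothetical "pretrained" weights (identity, for readability).
backbone = [[1.0, 0.0], [0.0, 1.0]]
# Small new-task dataset generated by y = 2*x0 + 3*x1.
data = [([1.0, 0.0], 2.0), ([0.0, 1.0], 3.0), ([1.0, 1.0], 5.0), ([0.5, 2.0], 7.0)]

head = train_head(data, backbone, head=[0.0, 0.0])
print([round(h, 2) for h in head])
```

The head recovers the new task's coefficients while `backbone` is left untouched. In a framework such as PyTorch, the same effect is usually achieved by setting `requires_grad = False` on the pretrained parameters.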

🔓 Unfreezing Layers

Some earlier layers are also updated, usually with a small learning rate so the pretrained features are not overwritten.

Allows deeper adaptation to the new task.

Useful when the new task differs more from the original one and there is enough data to update more layers without overfitting.
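Unfreezing can be sketched the same way. The version below is a minimal, framework-free illustration, assuming a two-layer toy model and hypothetical names (`forward`, `mse`, `fine_tune`, `lr_head`, `lr_backbone`). It updates the pretrained layer as well, but with a smaller learning rate than the head, which is one common way to adapt pretrained weights gently.

```python
# Toy sketch of unfreezing: the pretrained layer is updated too,
# but with a smaller learning rate than the head.

def forward(x, backbone, head):
    """Return the hidden ReLU activations and the scalar prediction."""
    hidden = [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in backbone]
    return hidden, sum(h * a for h, a in zip(head, hidden))

def mse(data, backbone, head):
    """Mean squared error of the model over a dataset."""
    return sum((forward(x, backbone, head)[1] - y) ** 2 for x, y in data) / len(data)

def fine_tune(data, backbone, head, lr_head=0.05, lr_backbone=0.005, epochs=300):
    """SGD on squared error over both layers, using separate learning rates."""
    backbone = [list(row) for row in backbone]  # work on a copy of the pretrained weights
    head = list(head)
    for _ in range(epochs):
        for x, y in data:
            hidden, pred = forward(x, backbone, head)
            error = pred - y
            for j, row in enumerate(backbone):
                if hidden[j] > 0.0:  # ReLU only passes gradient where it is active
                    scale = lr_backbone * error * head[j]
                    for i, xi in enumerate(x):
                        row[i] -= scale * xi
            head = [h - lr_head * error * a for h, a in zip(head, hidden)]
    return backbone, head

pretrained = [[1.0, 0.0], [0.0, 1.0]]  # hypothetical pretrained weights
start_head = [1.0, 1.0]                # hypothetical head from an earlier stage
data = [([1.0, 0.0], 2.4), ([0.0, 1.0], 2.4), ([1.0, 1.0], 4.8)]

before = mse(data, pretrained, start_head)
tuned_backbone, tuned_head = fine_tune(data, pretrained, start_head)
after = mse(data, tuned_backbone, tuned_head)
print(before, after)
```

The error on the new task drops, and `tuned_backbone` ends up slightly different from `pretrained`. The learning rates here are arbitrary values chosen for the toy data, not recommendations.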

🎯 When Should You Use Transfer Learning?

Transfer learning shines in specific scenarios:

1️⃣ Limited Data

If your dataset is small:

Training from scratch may cause overfitting.

Transfer learning lets the model rely on features it already learned from a much larger dataset, so it needs fewer labeled examples.

Perfect for startups, research projects, and niche problems.

2️⃣ Similar Domains

Transfer learning works best when tasks are related.

Examples:

A model trained on cats vs. dogs → adapted to classify dog breeds

A large language model → fine-tuned for sentiment analysis

In general, the more similar the domains, the better the results. When the domains differ too much, the pretrained features may help very little.

3️⃣ Time & Resource Constraints

Training deep networks from scratch requires:

Powerful GPUs

Large datasets

Significant time

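Transfer learning starts from weights that are already useful, and frozen layers need no gradient updates, so training typically takes far less time and compute. That makes it practical for real-world applications.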
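A quick back-of-the-envelope calculation shows where part of the saving comes from. The layer sizes below are hypothetical and `dense_params` is a helper made up for this sketch. It counts how many parameters still need updating when every layer except a new output head is frozen.

```python
# Rough count of trainable parameters when the pretrained
# layers are frozen. Layer sizes are hypothetical.

def dense_params(n_in, n_out):
    """Weights plus biases of one fully connected layer."""
    return n_in * n_out + n_out

layer_shapes = [(512, 256), (256, 128), (128, 10)]  # last layer is the new head
counts = [dense_params(n_in, n_out) for n_in, n_out in layer_shapes]

total = sum(counts)
trainable_when_frozen = counts[-1]  # only the head is updated

print(total)                                   # 165514
print(trainable_when_frozen)                   # 1290
print(f"{trainable_when_frozen / total:.2%}")  # 0.78%
```

Frozen layers still run in the forward pass, so the real speedup is smaller than this ratio suggests, but far fewer gradients have to be computed and stored.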

4️⃣ Better Performance

Transfer learning often improves:

✅ Accuracy

✅ Stability

✅ Generalization

Because the model has already learned meaningful patterns, it often performs better than the same architecture trained from scratch on a small dataset.

🏁 Final Thoughts

Transfer learning is one of the most impactful techniques in modern deep learning.

By reusing pretrained models and fine-tuning them for new tasks, we can:

Save time

Reduce computational cost

Improve performance

Build smarter AI systems faster

In a world where data and compute are valuable resources, transfer learning is not just efficient — it’s essential.