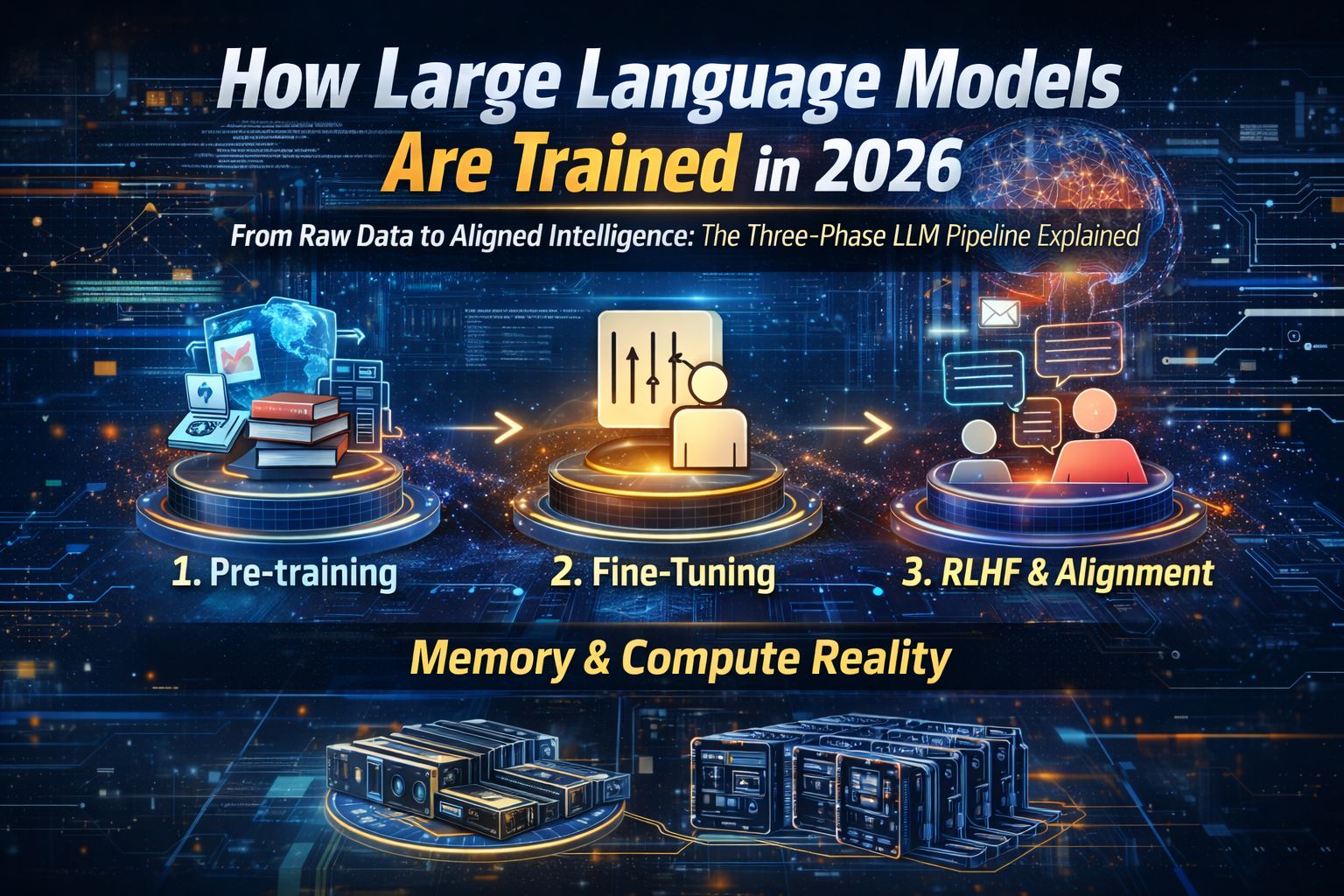

From Raw Data to Aligned Intelligence: The Three-Phase LLM Pipeline Explained

Training a Large Language Model (LLM) isn’t just about feeding it data. It’s a carefully staged engineering process that transforms trillions of raw tokens into structured reasoning systems.

As of 2026, the industry has largely standardized a three-phase training pipeline:

Pre-training

Supervised Fine-Tuning (SFT)

Alignment via RLHF

Let’s break it down clearly and technically — without losing the big picture.

Phase I: Pre-Training — Building the Foundation

Pre-training teaches a model two fundamental things:

What language is

How patterns in the world relate to each other

Data at Trillion-Token Scale

Models are trained on:

Web data (e.g., Common Crawl)

Books and academic papers

Code repositories (e.g., GitHub)

Curated high-quality corpora

Modern scaling laws (notably Chinchilla-style compute optimality) suggest:

For a fixed compute budget, scale parameters and data proportionally.

Many early models were undertrained relative to their size. Modern systems correct this.
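As a rough illustration of that rule, the sketch below splits a compute budget between parameters and tokens. It relies on two widely quoted approximations that this article does not state: training compute of about 6 FLOPs per parameter per token, and roughly 20 training tokens per parameter at the compute-optimal point. Both constants are rules of thumb, and the function name is hypothetical.

```python
import math

# Assumptions (rules of thumb, not exact laws):
#   training FLOPs   C ~ 6 * N * D   (N = parameters, D = training tokens)
#   compute-optimal  D ~ 20 * N      (Chinchilla-style ratio)
FLOPS_PER_PARAM_TOKEN = 6
TOKENS_PER_PARAM = 20


def compute_optimal_split(compute_flops: float) -> tuple[float, float]:
    """Return (parameters, tokens) that spend `compute_flops` under the assumptions above."""
    # C = 6 * N * (20 * N)  =>  N = sqrt(C / 120)
    params = math.sqrt(compute_flops / (FLOPS_PER_PARAM_TOKEN * TOKENS_PER_PARAM))
    tokens = TOKENS_PER_PARAM * params
    return params, tokens
```

Under these assumptions, multiplying the budget by 100 multiplies both the parameter count and the token count by 10, which is the proportional scaling the rule describes.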

The Core Objective: Next-Token Prediction

At its heart, pre-training is statistical pattern learning.

Given a sequence:

$x_1, x_2, \ldots, x_n$

The model predicts:

$P(x_{n+1} \mid x_1, \ldots, x_n; \theta)$

This is self-supervised learning — no manual labels required.
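To make the objective concrete, here is a minimal sketch of the loss this prediction is typically trained with: the average negative log-likelihood (cross-entropy) of each actual next token. The function names and the toy inputs are illustrative assumptions; in practice the logits come from the model.

```python
import math


def log_softmax(logits: list[float]) -> list[float]:
    """Numerically stable log-softmax over one vocabulary-sized score vector."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(v - m) for v in logits))
    return [v - log_z for v in logits]


def next_token_loss(logits_per_position: list[list[float]], tokens: list[int]) -> float:
    """Mean negative log-likelihood of each token given the tokens before it.

    logits_per_position[t] holds the scores for the token at position t + 1,
    computed from tokens[0..t]. The targets are the input shifted left by one,
    which is why no manual labels are needed.
    """
    targets = tokens[1:]
    if len(logits_per_position) != len(targets):
        raise ValueError("need one logit vector per predicted token")
    nll = [
        -log_softmax(logits)[target]
        for logits, target in zip(logits_per_position, targets)
    ]
    return sum(nll) / len(nll)
```

With a three-token vocabulary and uniform scores, the loss is ln(3); it falls as the model puts more probability on the token that actually comes next.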

Architecture: The Transformer

Most modern LLMs use decoder-only Transformer architectures.

The key innovation: Self-Attention

Self-attention allows the model to:

Weigh relationships between distant words

Capture long-range dependencies

Build contextual representations dynamically

Because self-attention processes every position of a sequence in parallel during training, it is a large part of why these models can be scaled to billions of parameters.
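The mechanism can be sketched in a few lines. The example below is a simplified, single-head version of scaled dot-product attention with a causal mask, written in plain Python for readability. It leaves out the learned query, key, and value projections and the multiple heads a real Transformer uses, and all function names are illustrative.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Turn raw scores into weights that are positive and sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def causal_self_attention(queries, keys, values):
    """Single-head scaled dot-product attention with a causal mask.

    queries, keys, values: one vector per position (lists of equal-length lists).
    Position t may only attend to positions 0..t, as in a decoder-only model.
    """
    scale = 1.0 / math.sqrt(len(keys[0]))
    outputs, weights = [], []
    for t, q in enumerate(queries):
        # Score the current position against itself and earlier positions only.
        scores = [dot(q, keys[s]) * scale for s in range(t + 1)]
        w = softmax(scores)
        # The output is the attention-weighted average of the visible values.
        out = [
            sum(w[s] * values[s][i] for s in range(t + 1))
            for i in range(len(values[0]))
        ]
        outputs.append(out)
        weights.append(w)
    return outputs, weights
```

Each row of `weights` shows how strongly one position draws on every earlier position, regardless of how far apart they are in the sequence.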

Phase II: Supervised Fine-Tuning (SFT)

A pre-trained model is essentially a powerful autocomplete engine.

It knows language — but it doesn’t reliably follow instructions.

SFT transforms it into an assistant.

Instruction Tuning

The model is trained on high-quality prompt-response pairs such as:

“Summarize this article”

“Explain quantum mechanics simply”

“Write a Python function to sort a list”

Unlike pre-training:

Dataset size: Thousands to millions of examples (not trillions of tokens)

Objective: Align output format and conversational style

This narrows the output distribution toward useful responses.
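One common way to set this up, assumed here because the article does not specify the loss, is to keep the next-token objective but compute it on the response tokens only. The sketch below builds such a training example. The token IDs are made up, the function name is hypothetical, and the one-position shift for next-token prediction is assumed to happen inside the loss.

```python
IGNORE_INDEX = -100  # label value skipped by the loss (PyTorch's cross-entropy default)


def build_sft_example(prompt_ids: list[int], response_ids: list[int]) -> dict[str, list[int]]:
    """Concatenate prompt and response; supervise only the response tokens."""
    input_ids = prompt_ids + response_ids
    # Prompt positions are masked so the model is not trained to reproduce the prompt.
    labels = [IGNORE_INDEX] * len(prompt_ids) + list(response_ids)
    return {"input_ids": input_ids, "labels": labels}
```

For example, `build_sft_example([5, 6, 7], [8, 9])` keeps all five tokens as input but leaves only the last two as labels, so gradients come from the response alone.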

Phase III: Alignment via RLHF

Even after SFT, models may:

Hallucinate facts

Produce biased content

Provide unsafe answers

Reinforcement Learning from Human Feedback (RLHF) addresses this.

RLHF Workflow

1️⃣ Sampling

The model generates multiple answers to a prompt.

2️⃣ Human Ranking

Evaluators rank responses.

3️⃣ Reward Model (RM)

A separate neural network is trained on these rankings to predict a preference score for any response.

4️⃣ Policy Optimization

The main model is fine-tuned using reinforcement learning (often PPO) to maximize the reward score.
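Steps 2 and 3 can be sketched as follows. The article does not name the reward model's loss; the example assumes the pairwise Bradley-Terry style loss that is commonly used for this purpose, and the function names are hypothetical. The reward values would come from the reward model, so plain floats stand in for them here.

```python
import math


def rankings_to_pairs(ranked_responses: list[str]) -> list[tuple[str, str]]:
    """Expand one human ranking (best first) into (chosen, rejected) pairs."""
    return [
        (ranked_responses[i], ranked_responses[j])
        for i in range(len(ranked_responses))
        for j in range(i + 1, len(ranked_responses))
    ]


def pairwise_preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """-log(sigmoid(r_chosen - r_rejected)) for one (chosen, rejected) pair."""
    margin = reward_chosen - reward_rejected
    # softplus(-margin) equals -log(sigmoid(margin)); this form avoids overflow.
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))
```

The loss is small when the reward model scores the preferred response higher and grows when the order is reversed, which is what pushes its scores toward the human rankings.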

RLHF improves:

Helpfulness

Harmlessness

Instruction compliance

But it does not eliminate hallucinations.

Hardware & Compute Reality (2026)

Training modern LLMs requires massive distributed infrastructure.

| Model Size | Precision | VRAM (Training) | Hardware |
|---|---|---|---|
| 7B | FP16/BF16 | ~112–140 GB | 8× A100 (80GB) or H100 |
| 70B | FP16/BF16 | ~1.1–1.4 TB | 32+ H100 GPUs |
| 405B+ | FP8/BF16 | 6+ TB | Thousands of H100/B200 GPUs |

Key insight:

Training requires 4× to 16× more memory than inference.

Why?

Because training must store:

Model weights

Gradients

Optimizer states

Activations

Step-by-Step Memory Breakdown: 7B Model (BF16 + Adam)

Let’s calculate rigorously.

A. Model Weights

7 billion parameters × 2 bytes (BF16)

$7 \times 10^9 \times 2\ \text{bytes} = 14\ \text{GB}$

B. Gradients

Gradients also require 2 bytes per parameter:

$7 \times 10^9 \times 2\ \text{bytes} = 14\ \text{GB}$

C. Optimizer States (Adam)

Adam maintains:

First moment (m)

Second moment (v)

Each is stored in FP32 (4 bytes per parameter), for 8 bytes per parameter in total.

$7 \times 10^9 \times 8\ \text{bytes} = 56\ \text{GB}$

D. Activations

Activation memory depends on:

Batch size

Sequence length

Hidden dimension

Number of layers

For typical 7B training configs:

~28–56 GB (varies by architecture)

Total Estimated Memory

| Component | Memory |
|---|---|
| Weights | 14 GB |
| Gradients | 14 GB |
| Optimizer States | 56 GB |
| Activations | ~28–56 GB |

Total ≈ 112–140 GB

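This is in line with commonly cited estimates for 7B models. Note that many mixed-precision setups also keep an FP32 master copy of the weights (4 bytes per parameter, about 28 GB here), which this tally leaves out.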
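The same arithmetic can be wrapped in a small helper to try other model sizes. This is a sketch that reuses the byte counts from this section. The function name is hypothetical, the activation figure has to be supplied by the caller, and the optional `fp32_master_weights` flag is an addition that is not part of the tally above.

```python
GB = 1e9  # decimal gigabytes, matching the arithmetic in this section


def training_memory_gb(
    num_params: float,
    activations_gb: float,
    fp32_master_weights: bool = False,  # assumption: not part of the article's tally
) -> dict[str, float]:
    """Per-component training memory in GB for BF16 training with Adam."""
    weights = num_params * 2 / GB          # BF16 weights, 2 bytes each
    gradients = num_params * 2 / GB        # BF16 gradients, 2 bytes each
    optimizer = num_params * (4 + 4) / GB  # Adam m and v in FP32, 4 bytes each
    master = num_params * 4 / GB if fp32_master_weights else 0.0
    total = weights + gradients + optimizer + master + activations_gb
    return {
        "weights": weights,
        "gradients": gradients,
        "optimizer": optimizer,
        "master_weights": master,
        "activations": activations_gb,
        "total": total,
    }
```

Calling `training_memory_gb(7e9, 28)` and `training_memory_gb(7e9, 56)` reproduces the two ends of the 7B range computed above.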

2026 Verification Check

✔ Chinchilla optimality principles widely adopted

✔ FP8 training supported on H100/B200 GPUs

✔ Memory optimization via ZeRO and tensor parallelism

✔ RLHF improves safety but hallucinations persist
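To give a feel for what ZeRO changes, the sketch below estimates per-GPU memory for weights, gradients, and Adam states when they are sharded across data-parallel GPUs. It assumes 2, 2, and 8 bytes per parameter respectively, and the usual description of the stages: stage 1 shards optimizer states, stage 2 also shards gradients, stage 3 also shards weights. Activations, communication buffers, and fragmentation are ignored, and the function name is hypothetical.

```python
def zero_model_state_gb(num_params: float, num_gpus: int, stage: int) -> float:
    """Per-GPU memory (GB) for weights, gradients and Adam states under ZeRO."""
    if stage not in (0, 1, 2, 3):
        raise ValueError("stage must be 0, 1, 2 or 3")
    if num_gpus < 1:
        raise ValueError("num_gpus must be at least 1")
    weights, grads, optim = 2.0, 2.0, 8.0  # assumed bytes per parameter
    if stage >= 1:
        optim /= num_gpus    # stage 1 shards optimizer states
    if stage >= 2:
        grads /= num_gpus    # stage 2 also shards gradients
    if stage >= 3:
        weights /= num_gpus  # stage 3 also shards the weights
    return num_params * (weights + grads + optim) / 1e9
```

For a 7B model on 8 GPUs, this estimate drops from 84 GB per GPU without sharding to 10.5 GB per GPU at stage 3, before activations are counted.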

The frontier is now shifting toward:

Reasoning models

Chain-of-Thought prompting

Tool-augmented systems

Retrieval-augmented generation (RAG)

Final Thoughts

Training an LLM is not a single step — it is a layered refinement process:

Pre-training builds intelligence.

SFT builds usability.

RLHF builds alignment.

And behind it all lies massive distributed compute.

The next leap won’t come from bigger models alone, but from more efficient training.