Rethinking AI Safety Beyond the Illusion of Oversight

As artificial intelligence systems become more autonomous, one phrase keeps surfacing in policy papers, product announcements, and regulatory debates: Human-in-the-Loop (HITL).

It sounds reassuring.

If something goes wrong, a human will step in.

But here’s the uncomfortable truth:

Human-in-the-Loop is a design pattern — not a control strategy.

And treating it as a safety guarantee may be one of the biggest misconceptions in modern AI governance.

The Illusion of Oversight

In engineering, a control system maintains stability through predictable feedback loops. When the output deviates from its target, the controller measures the error and corrects it automatically.

Humans don’t work like that.

When you insert a human into a high-speed AI workflow, you introduce:

Fatigue

Cognitive overload

Delayed reaction time

Automation bias (trusting the machine too much)

In theory, the human supervises the AI.

In practice, the human often becomes a bottleneck — or worse, a rubber stamp.

The Rubber Stamp Problem

Imagine an AI processing 1,000 financial transactions per second.

It flags one for review.

The human reviewer has seconds to decide.

Are they truly auditing?

Or just clicking “Approve” to keep pace?

In fast-moving environments like autonomous driving, this issue becomes critical. Systems that require humans to “take over” in emergencies assume the human is ready immediately. But studies of takeover requests have repeatedly found that a distracted driver needs several seconds to regain situational awareness.

Seconds matter. Humans are slow.
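To put rough numbers on the transaction example above, here is a minimal Python sketch of the reviewer's time budget. The 1,000 transactions per second comes from the text; the flag rate, the number of reviewers, and the function names are hypothetical assumptions chosen only for illustration.

```python
import math


def seconds_per_review(tx_per_second: float, flag_rate: float, reviewers: int) -> float:
    """Average time each reviewer can spend per flagged item before a backlog grows."""
    flagged_per_second = tx_per_second * flag_rate
    if flagged_per_second <= 0:
        return math.inf  # nothing is flagged, so there is no time pressure
    return reviewers / flagged_per_second


def reviewers_needed(tx_per_second: float, flag_rate: float, review_seconds: float) -> int:
    """Minimum reviewers needed to keep pace if each review takes review_seconds."""
    workload = tx_per_second * flag_rate * review_seconds
    # Round first so floating-point noise does not add a phantom reviewer.
    return math.ceil(round(workload, 9))


if __name__ == "__main__":
    # Assumed figures: 1,000 tx/s (from the text), 0.1% flagged (hypothetical).
    print(seconds_per_review(1000, 0.001, reviewers=5))
    print(reviewers_needed(1000, 0.001, review_seconds=120))
```

Under these assumed figures, five reviewers get about five seconds per flagged item, and giving each item a two-minute review would take 120 reviewers. The exact numbers matter less than the shape of the trade-off: review time per item shrinks in direct proportion to throughput.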

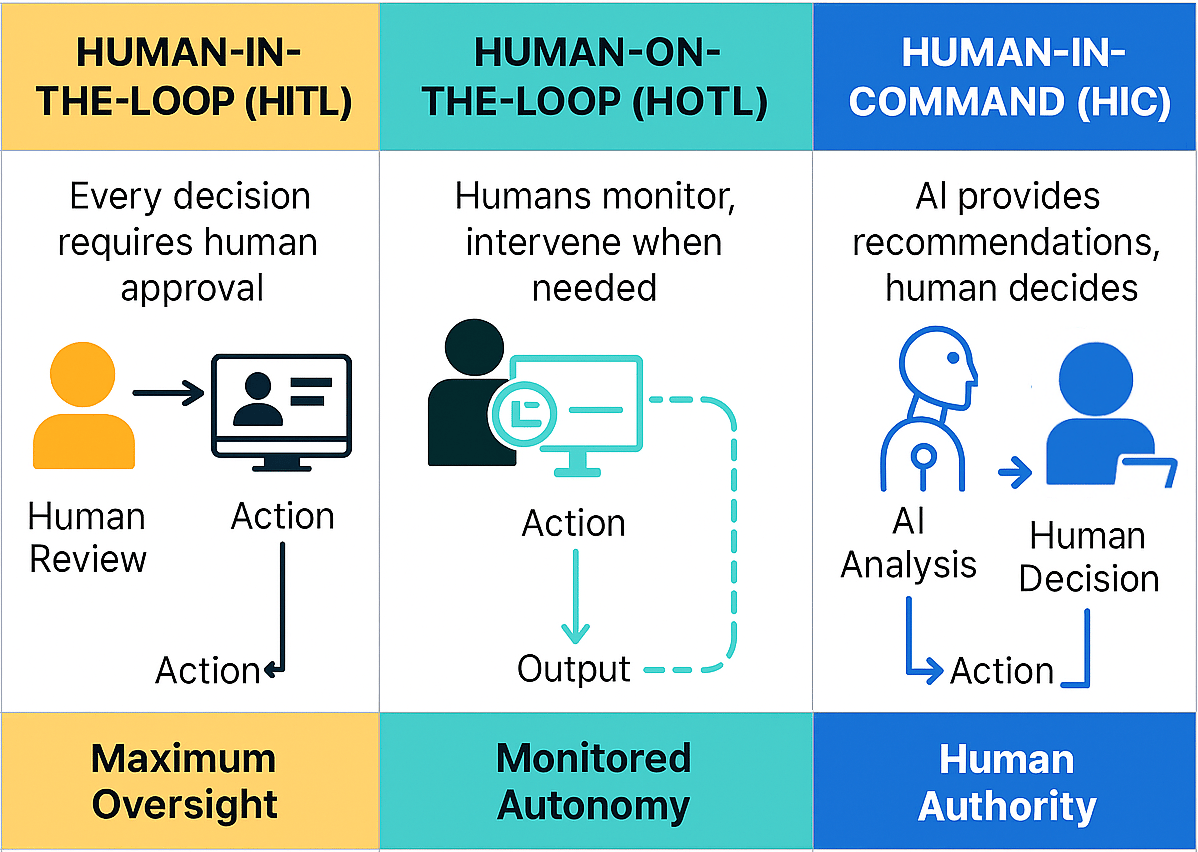

HITL vs Human-on-the-Loop vs Human-in-Command

Not all human oversight models are the same.

1️⃣ Human-in-the-Loop (HITL)

Human approval required for every action

Creates bottlenecks

Poor scalability

2️⃣ Human-on-the-Loop (HOTL)

Monitors the running system

Intervenes by exception

Still limited by reaction time

3️⃣ Human-in-Command

Defines system goals and constraints

Sets boundaries in advance

Operates strategically, not tactically

Only the third model functions as a real control philosophy.
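The difference between the three models can be sketched in a few lines of Python. This is an illustrative toy, not a reference implementation: the `Action` type, the `CountingReviewer` stand-in for a human, and the three function names are all hypothetical, and the "human" is just a callable that returns approve or reject.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Action:
    name: str
    amount: float


@dataclass
class CountingReviewer:
    """Hypothetical stand-in for a human; counts the decisions it is asked to make."""
    decide: Callable[[Action], bool]
    decisions: int = 0

    def __call__(self, action: Action) -> bool:
        self.decisions += 1
        return self.decide(action)


def human_in_the_loop(actions: List[Action], approve: Callable[[Action], bool]) -> List[Action]:
    """Every action waits for an explicit human decision."""
    return [a for a in actions if approve(a)]


def human_on_the_loop(
    actions: List[Action],
    is_anomalous: Callable[[Action], bool],
    approve: Callable[[Action], bool],
) -> List[Action]:
    """Actions run by default; only flagged exceptions reach the human."""
    executed = []
    for a in actions:
        if is_anomalous(a) and not approve(a):
            continue  # the human vetoed a flagged action
        executed.append(a)
    return executed


def human_in_command(actions: List[Action], max_amount: float) -> List[Action]:
    """The human sets a limit in advance; no human decision is needed at run time."""
    return [a for a in actions if a.amount <= max_amount]
```

With three actions of which one is unusually large, the first function asks the reviewer three times, the second asks once, and the third asks zero times because the limit was decided beforehand. All three can reach the same outcome; what changes is how much real-time human attention each one consumes.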

Why HITL Fails as a Safety Mechanism

There are three core failure points.

1️⃣ The Context Gap

AI systems — especially large language models — operate as probabilistic engines. When they make mistakes, the reasoning path is often opaque.

If a human doesn’t understand why the AI produced a result, they can’t meaningfully correct the underlying issue.

They’re reacting to outputs, not controlling causes.

2️⃣ Cognitive Load

Modern AI systems process:

Millions of documents

Massive sensor streams

High-frequency market data

No human can meaningfully monitor data at that scale.

At some point, oversight becomes symbolic.

The human is no longer a pilot — they are a passenger reading the manual mid-flight.

3️⃣ The Responsibility Gap

When a system fails, who is accountable?

If a human was technically “in the loop” but lacked sufficient time, context, or system transparency to intervene effectively, the HITL model becomes less about safety and more about liability distribution.

It looks like control.

It functions like legal insulation.

Where Human-in-the-Loop Actually Works

HITL is not useless. It’s just often misapplied.

It excels at refinement, not real-time control.

✔ Content Moderation

Humans interpret nuance, sarcasm, and cultural context.

✔ Medical Diagnostics

AI highlights anomalies; doctors apply experience and judgment.

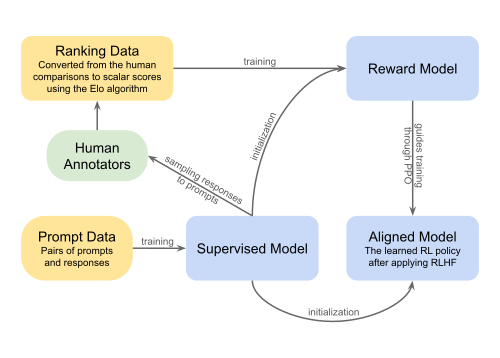

✔ Model Training (RLHF)

Humans shape preferences during development — before deployment.

The key difference?

The human is guiding the system upstream — not firefighting downstream.

The Shift Toward Guardrails

The future of AI safety lies less in human reaction and more in systemic constraints.

Emerging approaches focus on:

Hard-coded rule boundaries

Constitutional AI frameworks

Verifiable logic constraints

Access controls and layered permissions

Instead of a human watching every move, systems are being designed so that certain moves are ruled out in advance.

Humans define the “what.”

Machines execute the “how.”
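As one possible illustration of rule boundaries and layered permissions, the sketch below puts a fixed policy in front of every action, so out-of-bounds requests fail before anything runs. The `Policy`, `GuardedExecutor`, and `GuardrailViolation` names, the roles, and the limits are hypothetical; real guardrail systems vary widely and this is only a simplified sketch.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Tuple


class GuardrailViolation(Exception):
    """Raised when a requested action falls outside the predefined policy."""


@dataclass(frozen=True)
class Policy:
    """Defined by humans ahead of time (the "what"); hypothetical structure."""
    role_permissions: Dict[str, FrozenSet[str]]
    max_amount: float


class GuardedExecutor:
    """Runs actions (the "how") only if the policy allows them."""

    def __init__(self, policy: Policy) -> None:
        self._policy = policy
        self.executed: List[Tuple[str, str, float]] = []

    def execute(self, role: str, action: str, amount: float) -> None:
        # Layer 1: access control. Unknown roles get an empty permission set.
        allowed = self._policy.role_permissions.get(role, frozenset())
        if action not in allowed:
            raise GuardrailViolation(f"{role!r} may not perform {action!r}")
        # Layer 2: hard-coded boundary on the action's size.
        if amount > self._policy.max_amount:
            raise GuardrailViolation(f"{amount} exceeds limit {self._policy.max_amount}")
        self.executed.append((role, action, amount))


if __name__ == "__main__":
    policy = Policy({"agent": frozenset({"refund"})}, max_amount=100.0)
    executor = GuardedExecutor(policy)
    executor.execute("agent", "refund", 50.0)  # within bounds, runs
    try:
        executor.execute("agent", "refund", 5000.0)  # blocked by the limit
    except GuardrailViolation as err:
        print("blocked:", err)
```

The design choice worth noticing is that the check lives in the execution path itself. No reviewer has to notice the oversized refund in time, because the request cannot complete.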

Final Thought

Human-in-the-Loop gives comfort because it feels intuitive.

But intuition is not infrastructure.

As AI systems move toward higher autonomy, we must transition from:

Oversight as reassurance

to

Architecture as control.

The human role is evolving — from tactical supervisor to strategic architect.

And that distinction may determine whether advanced AI systems remain stable, scalable, and safe.